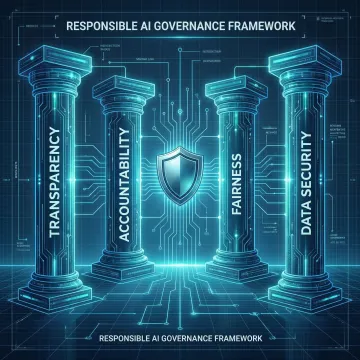

What are the 4 pillars of responsible AI?

The four pillars of responsible AI are Transparency (explainability of decisions and data usage), Accountability (ethical oversight and continuous monitoring), Fairness (bias elimination and inclusive outcomes), and Security & Privacy (robust data protection and access controls). Cybic's frameworks are built around these four principles, embedding each into AI architecture, governance policies, and operational workflows rather than treating them as compliance checkboxes.

What is the framework for responsible AI use?

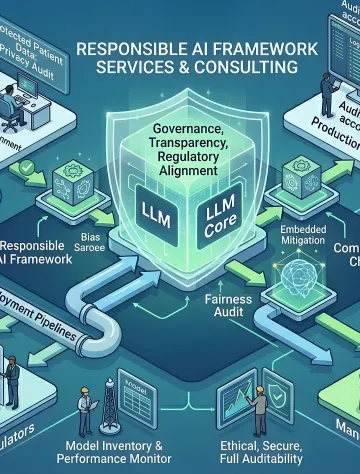

A responsible AI framework is a structured set of policies, technical controls, and governance processes that guide how AI systems are developed, deployed, monitored, and retired. It typically covers model transparency, bias auditing, regulatory compliance, data governance, access controls, and accountability mechanisms. Cybic builds enterprise-grade responsible AI frameworks tailored to your specific industry, regulatory environment, and operational context, ensuring alignment with regulations and standards such as GDPR, HIPAA, and SOC 2.

What industries does Cybic serve with responsible AI consulting?

Cybic serves enterprises across healthcare, financial services, manufacturing, retail, oil and gas, and the public sector. Each industry faces unique regulatory and ethical AI demands, from HIPAA-compliant clinical AI in healthcare to explainability requirements in financial decision-making. Our responsible AI frameworks are customized to address sector-specific compliance mandates, risk profiles, and governance expectations.

How does Cybic embed governance into AI systems?

Cybic incorporates governance at the architectural level, not as a post-deployment add-on. This includes role-based access controls (RBAC), encryption of data in transit and at rest, audit trails for all AI-driven actions, model lifecycle management, and regulatory alignment checkpoints built into CI/CD pipelines. Governance is treated as a foundational engineering requirement, not a documentation exercise.
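
As an illustration of how role-based access control and an audit trail can work together, here is a minimal Python sketch. It is not Cybic's implementation: the role names, permission strings, `AuditLog` class, and `authorize` function are all hypothetical, chosen only to show the pattern of checking a permission and recording every decision, allowed or denied.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping (an assumption for illustration);
# in practice this would be loaded from a governance policy store.
ROLE_PERMISSIONS = {
    "ml_engineer": {"model:read", "model:deploy"},
    "analyst": {"model:read"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; a real system would use durable, append-only storage."""
    entries: list = field(default_factory=list)

    def record(self, user: str, role: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        })


def authorize(user: str, role: str, action: str, log: AuditLog) -> bool:
    """Check the action against the role's permissions and log the decision."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user, role, action, allowed)  # denied attempts are logged too
    return allowed


log = AuditLog()
authorize("ana", "analyst", "model:deploy", log)      # denied: analysts can only read
authorize("eli", "ml_engineer", "model:deploy", log)  # allowed
```

Logging denied attempts alongside allowed ones is a deliberate choice in this sketch: an audit trail that only captures successful actions cannot show who tried to do something they were not permitted to do.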

Does Cybic train AI models on proprietary enterprise data?

No. Cybic maintains a strict policy of no model training on proprietary enterprise data. Your organization's data remains yours. It is used to power and customize AI systems but is never fed back into model training processes that could expose sensitive information. This is a core data governance commitment that protects your intellectual property and supports regulatory compliance.

What compliance standards does Cybic's responsible AI framework support?

Cybic's responsible AI frameworks are designed to support compliance with GDPR, HIPAA, CCPA, SOC 2, ISO standards, and emerging AI-specific regulations. During the governance design phase, we map your existing AI deployments and data flows against applicable regulatory requirements, identify gaps, and implement the technical and policy controls needed to achieve and maintain compliance.
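
The gap-identification step described above can be pictured as a set comparison between the controls a regulation calls for and the controls a system already has. The Python sketch below is a simplified assumption, not Cybic's methodology: the `REQUIRED_CONTROLS` catalog and its control names are hypothetical placeholders and are not a complete or authoritative reading of GDPR or HIPAA.

```python
# Hypothetical control catalog, for illustration only. Real requirements
# come from legal and compliance review, not from a hard-coded dictionary.
REQUIRED_CONTROLS = {
    "GDPR": {"data_inventory", "access_control", "deletion_process"},
    "HIPAA": {"access_control", "audit_logging", "encryption_at_rest"},
}


def find_gaps(system_controls: set, regulations: list) -> dict:
    """Return, per regulation, the required controls the system is missing."""
    gaps = {}
    for regulation in regulations:
        missing = REQUIRED_CONTROLS[regulation] - system_controls
        if missing:
            gaps[regulation] = sorted(missing)
    return gaps


# Example: a deployed system that has only two controls in place.
deployed = {"access_control", "audit_logging"}
print(find_gaps(deployed, ["GDPR", "HIPAA"]))
```

Regulations with no missing controls are left out of the result, so an empty dictionary means no gaps were found against the catalog used.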

How long does it take to implement a responsible AI governance framework?

Implementation timelines depend on the complexity of your existing AI landscape, the number of systems in scope, and your regulatory environment. A foundational governance assessment and framework design typically takes four to eight weeks. Full implementation across enterprise AI systems, including policy rollout, technical controls, and team training, generally spans three to six months, with ongoing monitoring and optimization thereafter.

Can Cybic retrofit governance into existing AI deployments?

Yes. Cybic conducts thorough audits of existing AI systems to assess governance gaps, bias risks, compliance exposure, and auditability deficiencies. We then design and implement a remediation roadmap that retrofits responsible AI controls, including access management, monitoring, explainability layers, and policy structures, into deployed models and pipelines without requiring a complete rebuild.