Introduction

AI is no longer a pilot-stage experiment. It's embedded in enterprise workflows, automated decisions, and operational infrastructure, from loan approvals and supply chain optimization to clinical diagnostics and hiring systems. Yet governance, risk, and compliance programs have struggled to keep pace with deployment speed.

In 2026, the EU AI Act moves into full enforcement, agentic AI systems proliferate across enterprises, and regulatory scrutiny intensifies across every major market. Organizations can no longer treat governance as a documentation exercise.

The gap between stated AI principles and operational enforcement is widening. Regulators are closing it with penalties, mandatory incident reporting, and direct audits of AI systems in production.

This article examines the key trends, challenges, and signals that will define AI GRC in 2026, with practical guidance for enterprises navigating an environment where the rules are being written in real time.

TL;DR

- The EU AI Act reaches full enforcement in 2026, carrying fines up to €35 million or 7% of global revenue for violations

- Agentic AI introduces accountability gaps that existing governance frameworks weren't built to handle

- Governance-by-design is replacing bolt-on compliance as the operational standard

- Third-party AI risk is a major blind spot: 76% of enterprise AI use cases now rely on external models

- Enforcing AI policies in live production systems remains the hardest problem most enterprises haven't solved

Key AI Governance, Risk & Compliance Trends Shaping 2026

EU AI Act Full Enforcement and Global Regulatory Convergence

In 2026, the EU AI Act's phased enforcement comes into full effect. The regulation classifies AI systems by risk, from minimal to unacceptable, and attaches severe financial consequences to non-compliance.

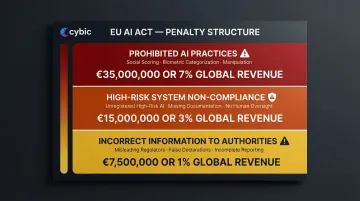

Penalty thresholds are substantial:

| Violation Type | Maximum Fine |
|---|---|
| Prohibited AI practices (e.g., social scoring, biometric categorization) | €35 million or 7% of global annual revenue, whichever is higher |
| High-risk system non-compliance | €15 million or 3% of global revenue |
| Incorrect information to authorities | €7.5 million or 1% of global revenue |
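To make the "whichever is higher" rule concrete, the sketch below computes the ceiling for each tier in the table. It assumes the higher-of rule applies to all three tiers (the table states it explicitly only for the first). The tier keys, the `max_fine_eur` helper, and the €2 billion revenue figure are illustrative names and numbers, not terms from the regulation.

```python
# Illustrative only: caps mirror the table above; tier keys are hypothetical labels.
TIERS = {
    # tier: (fixed cap in EUR, percent of global annual revenue)
    "prohibited_practice": (35_000_000, 7),
    "high_risk_noncompliance": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, global_revenue_eur: int) -> int:
    """Return the higher of the fixed cap and the revenue-based cap."""
    fixed_cap, pct = TIERS[tier]
    revenue_cap = global_revenue_eur * pct // 100
    return max(fixed_cap, revenue_cap)


if __name__ == "__main__":
    revenue = 2_000_000_000  # assumed EUR 2 billion global annual revenue
    for tier in TIERS:
        print(tier, max_fine_eur(tier, revenue))
```

At €2 billion in revenue the percentage-based cap is the larger number in every tier; at €100 million the fixed amounts are.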

The EU AI Act isn't operating in isolation. Legislative mentions of AI rose 21.3% across 75 countries in 2024, marking a ninefold increase since 2016. Canada's AIDA proposes a risk-based approach targeting "high-impact" systems, while China's 2023 Interim Measures impose strict obligations on generative AI providers, including algorithm filing and security assessments.

Enterprises operating across regions now face multi-jurisdictional compliance complexity that demands coordinated governance strategies rather than siloed compliance teams. That coordination is harder than it sounds: 72% of experts disagree that there is sufficient international alignment on AI standards.

Agentic AI Raises the Accountability Stakes

Agentic AI systems (those that autonomously execute multi-step tasks, make decisions across workflows, and act without continuous human instruction) introduce a new tier of governance complexity.

Enterprise adoption is accelerating rapidly. 62% of organizations are experimenting with AI agents, and 23% are actively scaling them within at least one business function. Gartner predicts that by 2026, 40% of enterprise applications will feature integrated task-specific AI agents.

The governance gaps are significant:

- Unclear accountability when agents make errors across multi-step processes

- Difficulty maintaining complete decision trails when agents chain actions across systems

- Challenges applying "human-in-the-loop" oversight at the speed agents operate

- Regulatory uncertainty about liability for autonomous decisions

Regulators are responding. The UK ICO warned in January 2026 that agentic AI exacerbates data protection risks, particularly around automated decision-making and purpose creep, and stressed that legal responsibility remains with the deploying organization regardless of system autonomy.

NIST has also stepped in, launching its "AI Agent Standards Initiative" to address security, identity, and governance challenges specific to autonomous systems.

Organizations deploying agentic AI without governance guardrails are accumulating significant liability.
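One way to approach the decision-trail gap listed above is an append-only log in which every agent action commits to the one before it, so that later edits are detectable. The following is a minimal sketch under that assumption. `DecisionTrail`, its field names, and the example actions are hypothetical, and a production log would also need timestamps, durable storage, and access control.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class DecisionTrail:
    """Append-only log where each entry stores the hash of the previous entry."""
    entries: list = field(default_factory=list)

    def record(self, agent_id: str, action: str, inputs: dict,
               approved_by: Optional[str] = None) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "step": len(self.entries),
            "agent_id": agent_id,
            "action": action,
            "inputs": inputs,
            "approved_by": approved_by,  # human reviewer, if any
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Because each entry embeds the previous hash, changing any earlier step makes `verify()` return `False`, which gives auditors a simple integrity check over a chain of agent actions.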

Governance-by-Design Displaces Bolt-On Compliance

Organizations are discovering that appending governance policies to already-deployed AI systems is ineffective and operationally fragile. The data is stark: while 77% of business leaders view GenAI as a business necessity, only 21% report that their organization's AI governance maturity is "systemic or innovative".

Governance embedded at the architectural level, through role-based access controls, encrypted data handling, model auditability, and traceable decision logs, proves far more durable and audit-ready than policies added after deployment. Organizations conducting regular AI system assessments are 3x more likely to achieve high GenAI value compared to those that don't.

Security and compliance must be structural, not cosmetic. Cybic applies this directly: its AI platform architecture incorporates RBAC, encrypted data handling, and auditability controls at the system design level and explicitly prohibits using proprietary enterprise data to train models.
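As a rough illustration of what "structural" can mean in code, the sketch below puts a role check and an audit record in front of a sensitive operation, so the call cannot run without both. It is not a depiction of any vendor's implementation. The role names, permission strings, `requires` decorator, and `deploy_model` function are all hypothetical.

```python
from functools import wraps

# Hypothetical role-to-permission mapping; a real system would load this from policy config.
ROLE_PERMISSIONS = {
    "ml_engineer": {"model:read", "model:deploy"},
    "analyst": {"model:read"},
}

# In-memory audit log for the sketch; production systems would use durable storage.
AUDIT_LOG: list = []


def requires(permission: str):
    """Run the wrapped call only if the caller's role grants `permission`; log either way."""
    def decorator(func):
        @wraps(func)
        def wrapper(user: dict, *args, **kwargs):
            allowed = permission in ROLE_PERMISSIONS.get(user["role"], set())
            AUDIT_LOG.append({
                "user": user["name"],
                "action": func.__name__,
                "permission": permission,
                "allowed": allowed,
            })
            if not allowed:
                raise PermissionError(f"{user['name']} lacks {permission}")
            return func(user, *args, **kwargs)
        return wrapper
    return decorator


@requires("model:deploy")
def deploy_model(user: dict, model_id: str) -> str:
    return f"{model_id} deployed by {user['name']}"
```

Denied attempts are logged as well as successful ones, which is usually what an auditor wants to see.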

Third-Party and Supply Chain AI Risk Under the Microscope

76% of enterprise AI use cases are now purchased rather than built internally (foundation models, vendor APIs, external data pipelines), creating opacity around training data provenance, embedded bias, and vendor-level regulatory compliance.

Regulators are increasingly signaling that organizations cannot absolve themselves of responsibility for AI harm caused by third-party tools they've deployed.

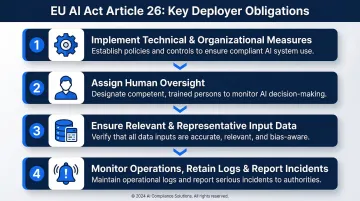

The EU AI Act places heavy obligations on "deployers." Under Article 26, deployers of high-risk AI systems must:

- Implement appropriate technical and organizational measures aligned with provider instructions

- Assign human oversight to competent natural persons

- Ensure input data is relevant and sufficiently representative

- Monitor system operation, retain automatically generated logs, and immediately inform providers and authorities of serious incidents

Most enterprises lack visibility into the governance practices of their AI vendors. The EU AI Act and emerging procurement guidance are beginning to impose due diligence requirements on deployers, not just developers, making third-party AI vetting a compliance obligation rather than an optional best practice.

The Biggest Challenges in AI Governance and Compliance

Explainability and the Black-Box Problem

Regulators, courts, and business stakeholders demand clear, auditable records of how AI systems reached specific decisions in high-stakes domains like lending, healthcare, hiring, and law enforcement. Many currently deployed models cannot provide this.

The tension is fundamental: model performance often favors complexity, while transparency requires interpretability. Enterprises must now treat explainability as a governance requirement, not an optional engineering goal. Without decision trails and audit-ready explanations, organizations cannot demonstrate compliance with emerging transparency mandates.

Algorithmic Bias as a Continuous Operational Risk

Bias in AI is not a one-time problem to solve before launch. It surfaces over time as data distributions shift, new populations interact with systems, and business contexts evolve.

New York City's Local Law 144 shows how this challenge is being codified into law. The regulation prohibits employers from using automated employment decision tools unless the tools have undergone an independent bias audit within the past year, a meaningful compliance burden for any organization using AI in hiring. A December 2025 audit by the NY State Comptroller found enforcement to be "ineffective," citing flawed complaint intake and superficial compliance reviews. The Comptroller identified 17 potential instances of non-compliance among 32 companies, signaling heightened enforcement risk.

Organizations must implement continuous bias monitoring, not just deployment-time checks.
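A recurring check could be as simple as recomputing a disparity metric on each new batch of decisions. The sketch below uses the impact ratio (each group's selection rate divided by the highest group's rate), a common metric in employment bias audits. The 0.8 threshold is the "four-fifths" rule of thumb and is an assumption here, not a requirement stated above. The function names and sample data are hypothetical.

```python
from collections import Counter


def impact_ratios(outcomes: list) -> dict:
    """Map each group to its selection rate divided by the highest group's rate.

    `outcomes` is a list of (group, was_selected) pairs.
    """
    totals, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    rates = {g: selected[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so ratios are undefined; report 0.0 for every group.
        return {g: 0.0 for g in rates}
    return {g: rate / best for g, rate in rates.items()}


def flag_groups(outcomes: list, threshold: float = 0.8) -> list:
    """Return groups whose impact ratio falls below the (assumed) threshold."""
    return sorted(g for g, r in impact_ratios(outcomes).items() if r < threshold)


if __name__ == "__main__":
    batch = ([("A", True)] * 6 + [("A", False)] * 4
             + [("B", True)] * 3 + [("B", False)] * 7)
    print(impact_ratios(batch))
    print(flag_groups(batch))
```

Running the same function on every scoring batch, rather than once before launch, is what turns a one-off audit into monitoring.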

The Governance-to-Enforcement Gap

Continuous monitoring only works if governance is actually enforced, and that's where most organizations fall short. They've invested in AI ethics principles, responsible AI policies, and governance documentation, but lack mechanisms to enforce these at the system level during live operations.

The numbers tell the story: only 20% of companies possess a mature model for the governance of autonomous AI agents. And although 55% of organizations have an AI board, many cannot translate board-level principles into operational controls.

The architectural response is governance embedded directly into workflow execution. Platforms like Cybic's Drava integrate auditability, access management, and compliance frameworks at the infrastructure level, so that controls are enforced by the systems themselves rather than by policy documents.

Multi-Jurisdictional Compliance Burden

Enterprises operating globally face a patchwork of AI regulations with different risk categorizations, documentation standards, and enforcement timelines. The OECD.AI Policy Observatory currently tracks AI policies across 72 countries.

Compliance teams must simultaneously reconcile requirements across:

- EU AI Act: risk-tiered obligations with strict documentation and conformity assessments

- US sector-specific rules: fragmented across finance, healthcare, and employment law

- Canada's AIDA: proposed federal framework with separate provincial considerations

- APAC regulations: diverging rapidly across Singapore, China, and Australia

This burden is outpacing current team capacities and GRC tooling at most organizations.

The AI Governance Talent Deficit

Effective AI governance requires professionals who understand both technical AI systems and regulatory compliance, a combination that is extremely scarce. 23.5% of organizations cite finding qualified AI professionals as a primary challenge, in particular talent that combines technical AI understanding with governance, risk, and compliance expertise.

This skills gap creates execution risk even when organizations have sound intentions:

- Governance frameworks go unenforced when no one has the capability to operationalize them

- Bias monitoring programs stall without engineers who can interpret model behavior in regulatory terms

- Audit documentation fails because technical teams and compliance teams speak different languages

The deficit isn't just a hiring problem; it's a structural gap between how AI systems are built and how they're governed.

What's Driving These AI GRC Trends

Regulatory Acceleration as the Primary Forcing Function

Governments worldwide have shifted from voluntary AI guidance to enforceable regulation. The EU AI Act is the clearest signal, but the broader momentum reflects a systemic shift. In the United States alone, state-level AI laws more than doubled from 49 in 2023 to 131 in 2024.

Key regulatory developments shaping enterprise AI programs:

- EU AI Act: Tiered risk classification with mandatory conformity assessments for high-risk systems

- US state laws: Sector-specific rules covering employment decisions, consumer profiling, and automated decision-making

- Global fragmentation: Diverging national frameworks forcing multinationals to manage conflicting compliance obligations simultaneously

GenAI Adoption Outpacing Governance Maturity

The barrier to deploying AI dropped dramatically with large language models and accessible GenAI tools. 88% of organizations report regular AI use in at least one business function, up from 78% in 2024.

This speed-first adoption culture left governance programs playing catch-up. The accumulated liability from ungoverned deployments is now surfacing in enforcement actions, civil litigation, and reputational damage. The cases below are the visible results.

Reputational and Financial Consequences of AI Failures Becoming More Visible

High-profile incidents have made AI risk tangible at the board level:

- Rite Aid: The FTC banned the company from using facial recognition for 5 years after finding the system falsely tagged consumers (disproportionately women and people of color) as shoplifters

- iTutorGroup: The EEOC secured a $365,000 settlement after the company's recruiting software automatically rejected female applicants over 55 and male applicants over 60

- Clearview AI: The UK Upper Tribunal upheld a £7.5M fine for unlawfully scraping images of UK residents

Each case shares a pattern: systems deployed without adequate governance controls, producing discriminatory or unlawful outcomes that regulators and courts are now willing to penalize at scale. For boards and C-suites, these aren't edge cases; they're precedents.

How These Trends Are Reshaping Enterprise AI Operations

Operational Impact

AI development and deployment pipelines now require compliance checkpoints that did not previously exist, such as risk classification reviews, bias evaluations, training data documentation, and governance approvals. These are no longer end-of-development gates. Governance is embedded directly into MLOps and AI engineering workflows, making compliance a continuous part of the development lifecycle rather than a final review.
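In pipeline terms, a checkpoint like this often reduces to a gate that refuses to promote a model until the required artifacts exist. The following is a minimal sketch assuming the four checkpoints named above. `ModelRelease`, `REQUIRED_CHECKS`, and `gate` are hypothetical names, and a real pipeline would verify the artifacts themselves rather than trust a set of labels.

```python
from dataclasses import dataclass, field

# Hypothetical checkpoint names, taken from the paragraph above.
REQUIRED_CHECKS = (
    "risk_classification",
    "bias_evaluation",
    "training_data_documentation",
    "governance_approval",
)


@dataclass
class ModelRelease:
    model_id: str
    completed_checks: set = field(default_factory=set)


def missing_checks(release: ModelRelease) -> list:
    """List required checkpoints the release has not completed, in policy order."""
    return [c for c in REQUIRED_CHECKS if c not in release.completed_checks]


def gate(release: ModelRelease) -> None:
    """Raise if any checkpoint is missing; call this from each pipeline stage."""
    missing = missing_checks(release)
    if missing:
        raise RuntimeError(
            f"{release.model_id} blocked; missing: {', '.join(missing)}"
        )
```

Calling `gate` at every stage, not only before production, is what keeps the check continuous rather than a final review.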

Business and Strategic Impact

Operational compliance demands are translating directly into strategic investment. Gartner predicts that by 2030, fragmented global AI regulations will drive a $1 billion total addressable market for AI governance platforms. Key business shifts include:

- Increased spending on AI governance tooling, dedicated governance roles, and board-level AI risk reporting

- Governance transparency becoming a vendor procurement requirement, with enterprises now conditioning partnerships on it

- The EU AI Act mandating that general-purpose AI model providers supply documentation enabling downstream compliance

Workforce and Organizational Impact

The governance gap is reshaping workforce structures. New hybrid roles such as AI compliance officer, AI risk analyst, and AI ethics lead are emerging, while existing legal and risk professionals are rapidly developing AI literacy to keep pace.

Organizations that bridge engineering, legal, compliance, and operations into cross-functional AI governance teams are seeing measurable results. Companies with "digitally and AI-savvy" boards outperform the industry average ROE by 10.9 percentage points, which suggests that governance capability pays off.

Future Signals: What to Watch in 2027 and Beyond

Mandatory AI Incident Reporting Is Gaining Traction

Similar to how GDPR normalized mandatory data breach notification, regulators are beginning to discuss requirements for organizations to report AI failures, harms, or near-misses to regulatory authorities.

The EU AI Act requires providers of high-risk systems to report serious incidents within 15 days. US Executive Order 14110 directed HHS to establish an AI safety program to track clinical errors resulting from AI in healthcare, although the order was rescinded in January 2025. A 2024 UK report highlighted a "critical gap" in the UK's regulatory plans regarding AI incident reporting.

Enterprises should start building incident detection and reporting infrastructure now, before these requirements are codified.
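A small piece of that infrastructure is deadline tracking. The sketch below uses the 15-day figure cited above and assumes it counts calendar days from the date the organization becomes aware of the incident. Both the counting rule and the helper names are assumptions, and the applicable legal text should be checked for shorter windows that may apply to certain incident types.

```python
from datetime import date, timedelta

REPORTING_WINDOW_DAYS = 15  # figure cited above; assumed to be calendar days


def report_due(aware_on: date) -> date:
    """Latest reporting date, counted from the day the incident became known."""
    return aware_on + timedelta(days=REPORTING_WINDOW_DAYS)


def days_remaining(aware_on: date, today: date) -> int:
    """Days left before the deadline; a negative value means it has passed."""
    return (report_due(aware_on) - today).days


if __name__ == "__main__":
    aware = date(2026, 3, 20)
    print(report_due(aware))
    print(days_remaining(aware, date(2026, 4, 1)))
```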

Standardized AI Auditing Is Coming

The governance landscape is moving toward formalized third-party AI audits, analogous to financial audits, that assess fairness, performance, documentation quality, and regulatory compliance.

ISO/IEC 42001:2023, the world's first international standard for AI Management Systems, is gaining ground quickly. BSI secured accreditation in March 2026 to deliver ISO/IEC 42001 certification services, becoming the first body globally to hold triple accreditation. BSI research indicates that 26% of organizations are already aligning with the standard.

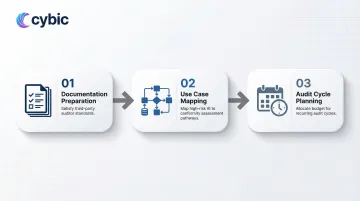

The EU AI Act adds further pressure. High-risk AI systems must undergo conformity assessments before market placement, with certain systems requiring review by a designated "notified body." Key implications for enterprise AI teams include:

- Preparing documentation that satisfies third-party auditor standards

- Mapping high-risk AI use cases to applicable conformity assessment pathways

- Allocating time and budget for recurring audit cycles as standards evolve

This will create a new professional services market and add operational requirements that AI teams should plan for now.

The Governance Maturity Divide Will Determine Competitive Position

Organizations that have embedded governance architecturally, with traceable, auditable, compliant AI systems, will be able to scale AI deployment with confidence as regulation tightens.

Those that have relied on documentation without enforcement mechanisms will face regulatory pressure, remediation backlogs, and slower deployment timelines as auditors arrive with harder questions.

Governance built into architecture from the start is far easier to demonstrate than governance retrofitted under deadline. Organizations that act now will have audit-ready systems when their competitors are still scrambling to catch up.

Frequently Asked Questions

What are the compliance risk trends in 2025 and 2026?

The dominant trends include EU AI Act enforcement beginning in 2026, the rise of agentic AI accountability requirements, third-party AI liability, and increased regulatory scrutiny of algorithmic bias, particularly in high-stakes sectors like healthcare, finance, and hiring.

What are the topics under AI governance?

Core pillars include model risk management, algorithmic bias and fairness, data privacy and security, explainability and transparency, regulatory compliance, auditability and traceability, and human oversight mechanisms.

What are the key obligations of AI regulatory compliance?

Key obligations include risk classification of AI systems, documenting training data and model decisions, and implementing human oversight for high-risk applications. Organizations must also conduct bias audits and meet data protection standards across the full AI lifecycle.

What is the 30% rule for AI?

No authoritative regulatory provision by that name exists in the EU AI Act, NIST frameworks, or major national regulations. The term is a business productivity rule of thumb suggesting AI automate roughly 70% of routine tasks, leaving 30% for human oversight and strategic direction. It is not a legal compliance threshold.

What is the biggest challenge in AI governance?

The governance-to-enforcement gap is the most critical challenge. Organizations have policies on paper but lack the architectural mechanisms to enforce them in live production systems, so stated principles never translate into operational controls.

How does the EU AI Act impact enterprise AI compliance?

The EU AI Act classifies AI applications by risk level, imposing strict documentation, transparency, and audit requirements for high-risk systems. Penalties for non-compliance reach up to €35 million or 7% of global revenue. It applies to any organization deploying AI that interacts with EU residents, regardless of where that company is headquartered.