Introduction

Regulated industries face a real tension: AI workflow automation promises substantial efficiency gains and competitive advantage, yet frameworks like HIPAA, GDPR, SOC 2, SOX, and FedRAMP demand accountability, traceability, and security that most out-of-the-box AI tools weren't designed to provide. The financial stakes are concrete: healthcare organizations faced average breach costs of $10.93 million in 2024, while financial services averaged $6.08 million per incident.

The governance gap compounds the risk. 69% of organizations suspect or have evidence that employees are using prohibited public GenAI tools, creating shadow automation that bypasses oversight entirely.

Compliance in AI automation must be an architectural decision made from day one, not a layer added after deployment. This guide covers the regulatory frameworks shaping AI in regulated sectors, the technical requirements that make compliance operationally viable, and the industry-specific priorities that separate deployments that scale from those that stall.

TLDR

- Governance must be embedded at the architecture level: security, audit trails, and access controls cannot be retrofitted after AI systems are deployed

- HIPAA, GDPR, and SOC 2 each impose distinct obligations, from Business Associate Agreements and minimum necessary access to immutable logging and mandated human review of high-impact decisions

- Shadow automation is the highest compliance risk: Gartner predicts more than 40% of enterprises will face security or compliance incidents from unauthorized shadow AI by 2030

- When evaluating platforms, prioritize auditability, role-based access controls, encryption, deployment flexibility, and vendor willingness to sign compliance agreements

Why Regulated Industries Face Unique AI Compliance Risks

AI automation amplifies existing compliance risks in ways traditional software does not. AI systems consume vast amounts of data, their decision-making resists easy inspection, and automated workflows move sensitive information across systems faster than manual controls can track.

The financial stakes reflect this exposure. The global average cost of a data breach reached $4.88 million in 2024, with healthcare topping the list at $10.93 million and financial services close behind at $6.08 million. Organizations using security AI and automation extensively saved an average of $2.2 million compared to those with no AI security deployment.

The Shadow Automation Problem

When employees feed Protected Health Information (PHI), financial records, or government data into unvetted large language models, they create audit gaps, access control failures, and regulatory exposure that only surface during incidents or audits. The most dangerous version of this problem emerges when teams adopt AI tools entirely outside IT governance, a pattern growing fast. Gartner predicts that by 2030, more than 40% of enterprises will experience security or compliance incidents linked to unauthorized shadow AI.

Regulators are already responding. The FTC's 2024 "Operation AI Comply" produced enforcement actions against companies that weaponized AI for deceptive practices, a clear signal that misuse carries real legal consequences, not just reputational risk.

What Separates Compliant from Non-Compliant Deployments

The distinction isn't the AI model itself; it's the governance layer around it. Compliant deployments require architectural controls built in before data processing begins:

- Defined data flow boundaries that prevent unauthorized access at ingestion

- Role-based access controls tied to regulatory requirements

- Tamper-proof audit trails that log decisions and data movement

- Human oversight enforced at critical checkpoints, not added as an afterthought

Core Compliance Frameworks for AI Workflow Automation

Regulated industries rarely operate under a single framework. An organization may need to satisfy HIPAA for patient data, SOC 2 for SaaS customer trust, and GDPR for EU user data simultaneously. Compliance is an ongoing design requirement, one that shapes system architecture, data handling, and operational workflows from the ground up.

HIPAA and PHI-Specific Obligations

HIPAA's Privacy Rule and Security Rule apply directly to AI workflows that touch Protected Health Information, even indirectly through model inputs, logs, or outputs. Automated workflows processing PHI require minimum necessary access, encrypted transmission, and a signed Business Associate Agreement (BAA) with every AI vendor involved.

Specific controls HIPAA mandates for AI workflow design:

- Role-based access controls (RBAC) restricting PHI access to authorized roles only

- Audit logs capturing all PHI access and modification events with timestamps and user attribution

- Risk assessments evaluating how AI systems handle ePHI and identifying vulnerabilities

- Incident response plans defining procedures when AI systems are involved in breaches or unauthorized access

HHS OCR has settled over 50 cases under its Right of Access initiative and increasingly targets noncompliance with the risk analysis provision of the HIPAA Security Rule. Organizations deploying AI without thorough risk analysis face enforcement action and substantial penalties.

GDPR and Automated Decision-Making

GDPR's "privacy by design" principle applies directly to AI workflows: organizations must document why data is processed, how long it is retained, who can access it, and where human intervention is possible. Article 22 restricts fully automated decisions that produce legal or similarly significant effects on individuals, requiring explicit consent or contractual necessity, plus suitable safeguards including the right to obtain human intervention.

GDPR applies to any AI system processing EU residents' data regardless of where the organization is based, making it a global concern for any regulated enterprise. Organizations must implement data protection by design and by default, including qualified human review capable of identifying bias introduced during model training or data ingestion.

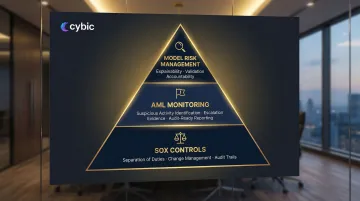

SOC 2 and Operational Controls

SOC 2 evaluations examine five trust service criteria: security, availability, processing integrity, confidentiality, and privacy. AI workflow automation must demonstrate consistent, documented controls across each criterion, with particular scrutiny on immutable logging, separation of duties, and access restriction.

When service organizations provide AI tools and analytic code, auditors examine:

- Development and maintenance practices for analytic code

- Oversight mechanisms ensuring AI engines learn appropriately

- Controls preventing unauthorized changes to models

FedRAMP and Public Sector Requirements

FedRAMP is the U.S. federal standard for cloud services used by government agencies, requiring pre-authorized cloud platforms, continuous monitoring, and stringent data handling controls. FedRAMP is prioritizing authorization of AI-based cloud services that provide conversational AI engines designed for routine federal use, provided they offer enterprise-grade features including single sign-on, SCIM provisioning, role-based access control, and guaranteed data separation. Services must demonstrate that model information from training on customer data will not leave the customer environment without authorization.

What Compliant AI Automation Actually Requires: Technical Non-Negotiables

Beyond knowing which frameworks apply, compliance must be operationalized through specific technical capabilities. These are the non-negotiable features any enterprise AI workflow system must have in a regulated environment.

Audit Trails and Immutable Logging

Every AI action (automated decisions, data access events, approvals, escalations, and human overrides) must be logged with timestamps and user attribution. Logs must be tamper-proof to support SOC 2 controls, HIPAA audit requirements, and GDPR traceability.

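NIST SP 800-53 Rev. 5 addresses both points in its Audit and Accountability controls. A control enhancement (AU-9(1)) calls for audit trails written to hardware-enforced, write-once media, and AU-3 specifies that each record capture what occurred, when and where, the source, the outcome, and the identity of individuals involved.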
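As a rough illustration of the record fields described above, the following Python sketch chains each log entry to the previous one with a SHA-256 hash, so any later edit to an earlier entry becomes detectable. The `AuditLog` class and its field names are hypothetical, and a hash chain is only tamper-evident: it is an assumption-laden stand-in for, not a replacement of, write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: dict) -> str:
    """Hash a record deterministically (sorted keys, compact separators)."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical append-only log where each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, actor: str, action: str, resource: str, outcome: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),  # when
            "actor": actor,        # identity involved
            "action": action,      # what occurred
            "resource": resource,  # where / on what
            "outcome": outcome,    # result of the event
            "prev_hash": prev_hash,
        }
        entry = {**record, "hash": _digest(record)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            record = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(record) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Calling `verify()` after appending entries returns `True`; altering any stored field afterward makes it return `False`, which is the property an auditor would check.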

Cybic's Drava platform embeds auditability and traceability directly into its architecture, with no third-party logging add-ons required, so compliance controls are native rather than bolted on.

Role-Based Access Control (RBAC)

Regulators consistently flag "over-permissioned" systems during audits, and for good reason. Access to data, AI model outputs, and workflow controls must be scoped to user roles, not open by default. RBAC enforces minimum-necessary access principles, with documented records of who has access to what and why.
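A minimal sketch of the "not open by default" idea, in Python: permissions are granted only through an explicit role map, and anything not listed is denied. The role names, permission strings, and the `is_allowed` helper are illustrative assumptions, not taken from any particular platform.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map; real roles come from your access policy.
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "claims_processor": frozenset({"claims:read", "claims:update"}),
    "compliance_auditor": frozenset({"claims:read", "audit_log:read"}),
    "workflow_admin": frozenset({"workflow:configure"}),
}


@dataclass(frozen=True)
class User:
    user_id: str
    roles: tuple[str, ...]


def is_allowed(user: User, permission: str) -> bool:
    """Deny by default: allow only if an assigned role grants the permission."""
    return any(
        permission in ROLE_PERMISSIONS.get(role, frozenset())
        for role in user.roles
    )
```

Because unknown roles map to an empty permission set, a misconfigured or unassigned user gets no access rather than broad access.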

Encryption in Transit and at Rest

HIPAA's Security Rule, GDPR, and FedRAMP all treat encryption as a baseline requirement covering both storage and transmission. Industry-standard protocols must protect data during transfer between systems and while it resides in databases or storage layers. There's no compliant path that skips this step.
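For the in-transit half of that requirement, one possible baseline in Python is a TLS client context that verifies certificates and refuses protocol versions older than TLS 1.2. The function name `strict_tls_context` is an assumption for illustration, and the TLS 1.2 floor is a commonly used choice rather than a value stated by any of the frameworks above; at-rest encryption depends on the storage layer and is not shown.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client-side TLS context that refuses protocol versions below TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

The returned context can be passed to standard-library clients that accept an `ssl.SSLContext`, so every outbound connection in a workflow shares the same floor.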

Human-in-the-Loop (HITL) Oversight at Defined Checkpoints

Fully autonomous AI decisions carry real compliance exposure in regulated environments. Auditable workflows must define explicit checkpoints where human review, approval, or override is required, particularly for high-stakes actions involving patient data, financial transactions, or government records.

GDPR Article 22 explicitly grants individuals the right to human intervention for automated decisions with significant effects. Financial regulators take a similar position: AI decisions must be explainable and tied to accountable human review.
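One way to picture a defined checkpoint is a routing function that sends high-impact or low-confidence actions to a human reviewer instead of executing them. The sketch below is a simplified Python illustration; the action names, the `confidence_floor` default, and the `route` function are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVED = "auto_approved"
    PENDING_REVIEW = "pending_review"


# Hypothetical action types that always need a human sign-off.
HIGH_IMPACT_ACTIONS = frozenset({"deny_claim", "reject_loan", "release_patient_record"})


@dataclass(frozen=True)
class ProposedAction:
    action_type: str
    model_confidence: float  # 0.0 to 1.0, as reported by the model


def route(action: ProposedAction, confidence_floor: float = 0.9) -> Decision:
    """Send high-impact or low-confidence actions to a human reviewer."""
    if action.action_type in HIGH_IMPACT_ACTIONS:
        return Decision.PENDING_REVIEW  # never automated, regardless of confidence
    if action.model_confidence < confidence_floor:
        return Decision.PENDING_REVIEW
    return Decision.AUTO_APPROVED
```

Keeping the high-impact list separate from the confidence check means a very confident model still cannot bypass review for the actions that matter most.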

Deployment Flexibility and Data Residency

Organizations with data sovereignty obligations, strict regulatory boundaries, or prohibitions on training AI models with proprietary data cannot accept a single-deployment-model vendor. Compliant AI automation must support cloud, hybrid, and on-premises environments without forcing sensitive data into shared public infrastructure. Infrastructure-agnostic architectures keep control of data residency and processing in the organization's hands, not the vendor's.
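A residency rule can be expressed as a small policy check that runs before a workflow step moves data. The Python sketch below assumes a hypothetical mapping from data class to permitted regions; the class names, region labels, and `check_residency` function are invented for illustration.

```python
# Hypothetical policy: which regions may store or process each data class.
ALLOWED_REGIONS: dict[str, frozenset[str]] = {
    "eu_personal_data": frozenset({"eu-west", "eu-central"}),
    "phi": frozenset({"us-east", "on-prem"}),
    "public": frozenset({"eu-west", "eu-central", "us-east", "on-prem"}),
}


class ResidencyViolation(Exception):
    """Raised when a workflow step would move data outside its allowed regions."""


def check_residency(data_class: str, target_region: str) -> None:
    """Raise unless the data class is explicitly permitted in the target region."""
    allowed = ALLOWED_REGIONS.get(data_class)
    if allowed is None:
        # Unclassified data is blocked rather than allowed through.
        raise ResidencyViolation(f"no residency policy defined for {data_class!r}")
    if target_region not in allowed:
        raise ResidencyViolation(
            f"{data_class!r} may not be processed in {target_region!r}"
        )
```

Raising an exception, rather than returning a flag, makes it harder for a workflow step to ignore the result and proceed anyway.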

Industry-Specific Compliance Priorities for AI Automation

While the technical requirements above apply universally, each regulated industry carries distinct compliance priorities that must shape how AI workflows are designed and governed.

Healthcare: Clinical Workflow Automation and PHI Governance

Healthcare AI automation covers claims processing, patient onboarding, clinical decision support, and prior authorizations, each triggering specific compliance obligations:

- PHI minimization ensuring only necessary data is accessed or processed

- Business Associate Agreements with all AI vendors touching PHI

- Clear attribution of responsibility for every automated action involving patient data

- Prohibition on using PHI to train AI models without explicit authorization
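PHI minimization from the list above can be sketched as a field allowlist applied before a record reaches an AI step. This Python example is a simplified assumption of how that might look; the purposes, field names, and `minimize` function are hypothetical.

```python
# Hypothetical field allowlists per workflow purpose.
FIELDS_BY_PURPOSE: dict[str, frozenset[str]] = {
    "claims_processing": frozenset({"patient_id", "procedure_code", "payer_id"}),
    "appointment_reminder": frozenset({"patient_id", "appointment_time"}),
}


def minimize(record: dict, purpose: str) -> dict:
    """Return only the fields the stated purpose is allowed to see."""
    # An unknown purpose gets an empty allowlist, so it sees nothing.
    allowed = FIELDS_BY_PURPOSE.get(purpose, frozenset())
    return {key: value for key, value in record.items() if key in allowed}
```

Applying the filter at the point where data enters the workflow keeps fields such as free-text clinical notes out of model inputs and logs unless a purpose explicitly needs them.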

Healthcare entities must conduct accurate and thorough risk analyses of ePHI handled by AI vendors. HHS OCR settled with Top of the World Ranch Treatment Center for $103,000 after finding the entity failed to conduct proper risk analysis following a phishing attack.

Financial Services: SOX, AML, and Transaction-Level Auditability

Fraud detection, loan screening, regulatory reporting, and transaction monitoring all fall under SOX controls including separation of duties, change management, and audit trails. The Federal Reserve's SR 11-7 guidance mandates rigorous model risk management, including effective challenge and validation, which applies equally to AI/ML models.

Financial institutions must ensure AI models are explainable, auditable, and governed with clear accountability; model errors or misuse carry direct regulatory and financial consequences.

Anti-Money Laundering (AML) requirements add another layer: AI systems monitoring transactions must produce audit-ready evidence demonstrating how suspicious activity was identified and escalated.

Energy and Oil & Gas: Operational AI Under Safety Regulations

In energy and oil & gas, AI workflow automation covering predictive maintenance, safety compliance monitoring, and operational reporting must comply with sector-specific regulations such as NERC CIP for critical infrastructure and applicable environmental reporting standards.

NERC CIP-013 requires responsible entities to develop documented supply chain cyber security risk management plans that include processes for procuring systems that address vendor notification of incidents and verification of software integrity. Auditability of AI-driven operational decisions isn't optional; it's the primary mechanism for demonstrating regulatory compliance when incidents occur.
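Software integrity verification often comes down to comparing an artifact's digest with a value the vendor publishes through a separate channel. The Python sketch below assumes SHA-256 and uses hypothetical helper names (`sha256_of`, `verify_artifact`); it illustrates the idea and is not a procedure prescribed by NERC CIP-013.

```python
import hashlib
import hmac


def sha256_of(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the artifact bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, expected_sha256: str) -> bool:
    """Compare an artifact's digest with the value the vendor published."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_of(data), expected_sha256.lower())
```

A deployment pipeline could refuse to load a model file or package when `verify_artifact` returns `False`, and record the result in the audit log either way.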

Manufacturing and Public Sector: Quality Systems and Government Standards

Manufacturing AI workflows for quality control and supply chain orchestration operate under ISO 9001/AS9100 quality management frameworks, which require rigorous traceability and continuous improvement documentation. Public sector automation must comply with FedRAMP, FISMA, and government data handling standards.

Both sectors share deployment constraints that directly reflect their compliance requirements:

- Air-gapped or on-premises environments where data cannot leave controlled infrastructure

- Infrastructure-agnostic architectures that avoid vendor lock-in while meeting security mandates

- Documented traceability for every automated decision touching regulated processes

How to Evaluate an AI Automation Platform for Regulated Environments

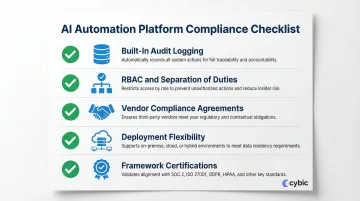

Organizations should treat AI platform selection as a compliance procurement decision, not just a technology decision. Use this checklist to evaluate any AI automation platform's compliance readiness:

Critical Evaluation Criteria:

- Built-in audit logging: Native to the platform, not an add-on. Bolt-on logging creates gaps that auditors will find.

- RBAC and separation of duties: Role-based access enforced at the system level, without requiring external tools to fill the gaps.

- Vendor compliance agreements: Confirm the vendor will sign a BAA or equivalent data processing agreement before any data is shared.

- Deployment flexibility: Operates across cloud, hybrid, and on-premises without routing enterprise data through shared training pipelines.

- Framework certifications: Verified compliance with SOC 2 Type II, FedRAMP, HIPAA, or whichever frameworks your regulatory environment requires.

These aren't just vendor checklist items; they determine whether your AI deployment survives a regulatory audit or creates new exposure. Cybic's Drava platform is built to meet each of these criteria, with governance embedded at the architectural level from the start, not retrofitted after deployment. That means:

- Role-based access controls enforced natively

- Encrypted data protection in transit and at rest

- Full auditability of AI-driven actions and workflows

- No model training on proprietary enterprise data

The Most Common Evaluation Mistake

Organizations often choose platforms based on AI capability alone without validating governance architecture. In regulated environments, a powerful AI tool with weak access controls and opaque logging creates more compliance risk than the manual process it replaces. Evaluate governance first, AI capabilities second.

Involve Compliance Teams Early

Legal, compliance, and security teams must participate in the evaluation stage, not after deployment. They can identify regulatory requirements, assess vendor contracts for compliance gaps, and confirm the platform architecture supports audit requirements before implementation begins.

Frequently Asked Questions

What AI software is HIPAA compliant?

HIPAA-compliant AI software must include data encryption in transit and at rest, role-based access controls, audit logging of all PHI interactions, and a signed Business Associate Agreement (BAA). Compliance is determined by architectural controls, not certification status, so verify that the vendor's system is designed to satisfy HIPAA's Privacy and Security Rules before deployment.

What are the most effective AI tools for automating regulatory compliance tasks in financial services?

The most effective tools combine workflow orchestration, audit trail generation, RBAC, and explainable AI outputs that support SOX controls, AML monitoring, and regulatory reporting. The governance layer matters more than the underlying model: how decisions are logged, who can access what, and whether the system produces audit-ready evidence on demand.

What is an AI agent for GDPR compliance?

A GDPR-compliant AI agent processes personal data lawfully, with purpose limitation, data minimization, transparent decision logic, and support for individual rights like erasure and access. It must document the legal basis for processing, enforce retention policies automatically, and provide human intervention points where Article 22 requires it for automated decisions.

Which platform is best for AI automation?

For regulated industries, the platform needs governance built into its architecture: audit trails, RBAC, encryption, deployment flexibility across cloud or on-prem environments, and strict controls on how enterprise data is handled. Match the platform to the specific frameworks your organization must satisfy and confirm it can operate within your existing infrastructure without requiring a full rearchitecture.

How does audit logging work in AI workflow automation?

Audit logging in AI workflow automation captures every action (AI decisions, data access, human approvals, overrides, and escalations) with timestamps and user attribution, stored in a tamper-proof log. These logs form the evidentiary backbone for demonstrating compliance with SOC 2, HIPAA, GDPR, and other frameworks during audits or investigations.

Can AI automation remain compliant as it scales across multiple regions or business units?

Compliant scaling is achievable when governance controls (RBAC, audit logging, data residency enforcement, and approval workflows) are built into the platform architecture rather than configured manually per deployment. Multi-region organizations need a platform that enforces jurisdiction-specific data residency rules and applies consistent compliance policies across different regulatory environments without per-instance customization.