Introduction

Healthcare organizations face mounting pressure as AI adoption outpaces their capacity to govern it. In 2024, 71% of hospitals reported using predictive AI integrated into their EHR, yet only 18% have a mature governance structure and a fully formed AI strategy. This gap creates operational urgency: algorithmic errors can directly contribute to patient harm, HIPAA violations expose organizations to regulatory penalties, and FDA-regulated clinical decision support tools require rigorous oversight that many health systems lack the infrastructure to provide.

Closing that gap starts with understanding what a governance framework actually requires. An AI governance framework for healthcare is a structured system of policies, oversight bodies, technical controls, and processes that ensure AI tools deployed in clinical and operational settings are safe, ethical, compliant, and continuously monitored.

Healthcare frameworks carry distinct demands that generic enterprise AI governance does not. They must navigate HIPAA obligations, FDA oversight of AI/ML-based software as a medical device (SaMD), and the "black box" problem — where algorithms make recommendations no one can explain or audit, eroding trust with both patients and clinicians.

What follows is a practical breakdown of what a healthcare AI governance framework contains, how it functions across the AI lifecycle, and where organizations consistently fall short.

TLDR

- A healthcare AI governance framework spans the entire AI lifecycle, covering data, algorithms, deployment, monitoring, and accountability. It is not simply a policy document.

- Healthcare uniquely requires governance that addresses HIPAA compliance, algorithmic bias affecting vulnerable patient populations, and FDA alignment for clinical AI tools.

- Effective frameworks span six core components, from governance structure and data governance to bias assessment and ongoing monitoring, each covered in depth below.

- Implementation requires a phased rollout with role-specific training, shadow deployments, and post-deployment feedback loops rather than a single launch event.

- Governance effectiveness depends heavily on resource availability, organizational maturity, and whether governance is embedded in AI architecture from the start.

What Is an AI Governance Framework in Healthcare — and Why Healthcare Can't Ignore It

An AI governance framework in healthcare is a formalized set of structures, policies, technical controls, and accountability mechanisms that regulate how AI tools are selected, validated, deployed, and monitored. Its scope spans clinical, administrative, and operational workflows — from diagnostic decision support and patient monitoring to prior authorization and coding.

This is not a vendor compliance checklist, a one-time ethics review, or a standalone IT policy. Healthcare-specific governance differs from general enterprise AI governance because it must address HIPAA's Privacy and Security Rules, FDA oversight of AI/ML-based SaMD, and the reality that algorithmic errors can contribute directly to patient harm.

Why Governance Is Now Operationally Urgent

AI adoption in healthcare is moving fast. According to Menlo Ventures' research, 22% of healthcare organizations have implemented domain-specific AI tools — a 7x increase over 2024 and 10x over 2023. Yet governance frameworks remain fragmented and often assume large-resource organizations, leaving community and regional health systems exposed.

The risks are not hypothetical:

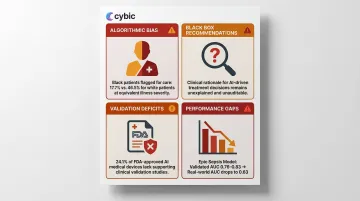

- Algorithmic bias against underrepresented populations: A seminal 2019 study demonstrated that using healthcare costs as a proxy for health needs caused an algorithm to systematically underestimate the illness of Black patients; remedying this disparity would increase the percentage of Black patients receiving additional help from 17.7% to 46.5%

- "Black box" clinical recommendations: Clinicians cannot explain or audit AI outputs, eroding trust and creating liability exposure

- Validation deficits: An analysis of 903 FDA-approved AI-enabled medical devices found that 24.1% explicitly stated no clinical performance studies were conducted

- Real-world performance gaps: The widely implemented Epic Sepsis Model predicted the onset of sepsis with an AUC of 0.63 in an external validation study, substantially worse than the performance reported by its developer (AUC 0.76-0.83)

Core Components of a Healthcare AI Governance Framework

Component 1: Governance Structure and Accountability

Every healthcare AI governance framework must begin with a formal oversight body—not necessarily a standalone team, but a designated multidisciplinary committee with clear decision rights.

Who should be included:

- Executive leadership with board-level accountability

- Clinical experts who can evaluate AI outputs against care standards

- IT and data science staff with technical expertise

- Regulatory, legal, and ethics professionals

- Cybersecurity and data privacy officers

- Patient or community representatives

Larger or more AI-mature organizations may warrant a Chief Health AI Officer role, mirroring the emergence of Chief Medical Informatics Officers. This body must have formal authority to approve, modify, or decommission AI tools—not just advisory input.

Component 2: Data Governance, Privacy, and Security

Healthcare AI governance must enforce HIPAA-aligned data controls at every stage of each AI tool's lifecycle, including for de-identified datasets used in model training.

Minimum requirements:

- Encryption of data in transit and at rest

- Role-based access controls (RBAC) restricting system access by defined roles

- Regular access audits and incident response planning

- Data use agreements that restrict permitted uses, prohibit re-identification, and impose third-party vendor obligations
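As a minimal illustration of role-based access control, the sketch below maps hypothetical roles to permitted actions on an AI tool's data, denies anything not explicitly granted, and logs every decision so access audits have a record to review. The role names, actions, and in-memory log are assumptions for illustration, not a standard or any vendor's API.

```python
from enum import Enum


class Action(Enum):
    VIEW_PREDICTIONS = "view_predictions"
    VIEW_TRAINING_DATA = "view_training_data"
    EXPORT_DATA = "export_data"
    CONFIGURE_MODEL = "configure_model"


# Hypothetical role-to-permission mapping; real roles come from local policy.
ROLE_PERMISSIONS: dict[str, frozenset[Action]] = {
    "clinician": frozenset({Action.VIEW_PREDICTIONS}),
    "data_scientist": frozenset({Action.VIEW_PREDICTIONS, Action.VIEW_TRAINING_DATA}),
    "ai_admin": frozenset({Action.VIEW_PREDICTIONS, Action.CONFIGURE_MODEL}),
}

# Every decision is recorded as (user, role, action, allowed) for later audits.
access_log: list[tuple[str, str, str, bool]] = []


def is_allowed(role: str, action: Action) -> bool:
    """Deny by default: unknown roles and ungranted actions are refused."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())


def check_access(user_id: str, role: str, action: Action) -> bool:
    """Evaluate a request and append the outcome to the access log."""
    allowed = is_allowed(role, action)
    access_log.append((user_id, role, action.value, allowed))
    return allowed
```

In this sketch no role holds `EXPORT_DATA`, so bulk export would require an explicit, separately approved grant rather than being implied by another role.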

Platforms purpose-built for healthcare AI—like Cybic's governed AI platform—embed these controls at the architecture level rather than layering them on after deployment, which reduces compliance gaps. The result is a system where auditability and regulatory alignment are operational from day one, not patched in later.

Component 3: Bias and Risk Assessment

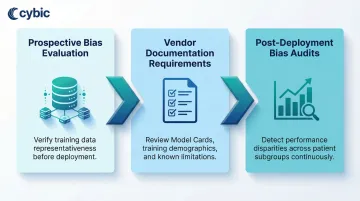

AI bias in healthcare is not hypothetical. Research has documented algorithms that systematically underserved Black patients because they used healthcare cost as a proxy for health need. Governance frameworks must include:

- Prospective bias evaluation: Verifying that training datasets are representative of the populations being served

- Vendor documentation requirements: Requesting Model Cards or equivalent documentation that disclose training data demographics, known limitations, and validation results

- Post-deployment bias audits: Conducting internal audits after deployment, particularly for high-risk clinical tools, to detect performance disparities across patient subgroups
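A post-deployment bias audit can start with something as simple as computing sensitivity (true positive rate) for each patient subgroup and flagging the largest gap. The sketch below assumes binary labels stored as (subgroup, true label, model prediction) tuples and a hypothetical 0.1 tolerance; which metric and which threshold to use are local policy choices.

```python
from collections import defaultdict


def subgroup_tpr(records):
    """Sensitivity (true positive rate) per subgroup.

    records: iterable of (subgroup, y_true, y_pred) with binary labels.
    Subgroups with no positive cases are omitted, since TPR is undefined for them.
    """
    positives = defaultdict(int)
    true_positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def flag_disparity(records, max_gap=0.1):
    """Return (flagged, gap, rates); flagged when best-minus-worst TPR exceeds max_gap."""
    rates = subgroup_tpr(records)
    if not rates:
        raise ValueError("no positive cases to evaluate")
    gap = max(rates.values()) - min(rates.values())
    return gap > max_gap, gap, rates
```

For example, if a tool catches 2 of 3 true cases in subgroup A but only 1 of 3 in subgroup B, the gap is about 0.33 and the tool is flagged, even though its aggregate sensitivity (3 of 6) might look acceptable on its own.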

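The HHS Office for Civil Rights (OCR) final rule implementing Section 1557 of the Affordable Care Act prohibits discrimination through patient care decision support tools, including AI, and requires covered entities to make reasonable efforts to identify and mitigate the risk of discrimination from those tools.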

Component 4: Algorithm Evaluation and Validation

Governance frameworks must require both external vendor validation and internal local validation before deployment.

Shadow deployments are a critical risk mitigation step: running an AI tool in parallel with current workflows without acting on its outputs. This allows organizations to compare AI recommendations against clinician decisions and actual patient outcomes before full integration.
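The comparison a shadow deployment enables can be sketched in a few lines. This is a minimal illustration, assuming each logged case records a binary AI flag (logged, never acted on), the clinician's actual decision, and the later observed outcome; the `ShadowCase` structure and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ShadowCase:
    ai_flag: bool         # what the model would have recommended (not acted on)
    clinician_flag: bool  # what the care team actually decided
    outcome: bool         # observed outcome, e.g. condition later confirmed


def summarize_shadow_run(cases: list[ShadowCase]) -> dict[str, float]:
    """Agreement between AI and clinicians, and how often each matched the outcome."""
    n = len(cases)
    if n == 0:
        raise ValueError("no shadow cases logged")
    return {
        "agreement": sum(c.ai_flag == c.clinician_flag for c in cases) / n,
        "ai_accuracy": sum(c.ai_flag == c.outcome for c in cases) / n,
        "clinician_accuracy": sum(c.clinician_flag == c.outcome for c in cases) / n,
    }
```

Plain accuracy is a crude summary when outcomes are rare; in practice the same logged cases would also feed sensitivity, specificity, and subgroup breakdowns before any go-live decision.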

Many commercially approved AI tools are not externally validated. Of 26 regulatory-approved AI products in digital pathology, only 42% had a peer-reviewed publication describing external validation. Healthcare organizations should confirm performance in their specific patient population and clinical context before full integration.
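Confirming performance locally often means recomputing a discrimination metric such as AUC on the organization's own labeled cases and comparing it with the vendor's reported figure. The sketch below computes AUC from its rank interpretation (the probability that a randomly chosen positive case scores higher than a randomly chosen negative one, with ties counted as half). The `compare_to_vendor` rule and its 0.05 tolerance are hypothetical placeholders for a locally agreed acceptance criterion.

```python
def auc(scores: list[float], labels: list[int]) -> float:
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5.

    This pairwise form is O(P * N): fine for a sketch, slow for very large cohorts.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def compare_to_vendor(local_auc: float, vendor_low: float, tolerance: float = 0.05) -> str:
    """Hypothetical rule: escalate if local AUC falls well below the vendor's lowest reported value."""
    if local_auc < vendor_low - tolerance:
        return "below vendor claim: escalate before go-live"
    return "consistent with vendor claim"
```

Applied to the Epic Sepsis Model figures cited earlier, a local AUC of 0.63 against a vendor-reported low end of 0.76 would be escalated under this rule.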

Component 5: Ongoing Quality Monitoring

Deployment is not the finish line. AI models can degrade over time through dataset shift, which occurs when real-world patient data diverges from the data a model was trained on. In one study of an emergency department admissions model, AUROC fell from 0.856 before the pandemic to 0.826 during COVID-19.
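One common way to watch for dataset shift is the population stability index (PSI), which compares how a model input or output score is distributed across bins in a baseline period versus a recent period: PSI = Σ (actual − expected) × ln(actual / expected). The sketch below assumes the data has already been binned into proportions. The 0.1 and 0.25 cut-offs are a widely used rule of thumb, not a regulatory standard.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions (proportions).

    eps replaces empty bins so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("bin counts must match")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_level(value: float) -> str:
    """Rule-of-thumb bands; tune locally for each model and input."""
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate shift: review"
    return "major shift: escalate"
```

For instance, a baseline of four equal bins shifting to proportions of 0.1, 0.2, 0.3, and 0.4 yields a PSI of roughly 0.23, which falls in the review band.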

Monitoring requirements include:

- Regular performance reviews against predefined thresholds, with escalation triggers for model retraining

- Feedback channels between clinical staff and vendors

- Adverse event reporting processes (treated like patient safety events)

- Dashboards to track AI tool outputs over time
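Predefined thresholds are easiest to enforce consistently when they are recorded as data rather than held in someone's head. The sketch below assumes a hypothetical per-tool threshold with a floor (below it, escalate) and a warning margin just above it (flag for review); the metric, values, and action labels are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    floor: float        # below this value: escalate to the governance committee
    warn_margin: float  # within this margin above the floor: flag for review


def evaluate(threshold: Threshold, observed: float) -> str:
    """Map an observed metric value to a predefined monitoring action."""
    if observed < threshold.floor:
        return "escalate"  # e.g. consider retraining, rollback, or decommissioning
    if observed < threshold.floor + threshold.warn_margin:
        return "review"
    return "ok"
```

With an AUROC floor of 0.80 and a 0.03 margin, the emergency department model cited above would move from "ok" at 0.856 to "review" at 0.826, prompting a look before performance reaches the escalation floor.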

Component 6: Patient Transparency and Staff Education

Governance frameworks must address two distinct obligations:

Patient transparency: Disclosing to patients when AI directly influences their care decisions and how their data is used. This includes maintaining audit trails that document when and how AI outputs influenced clinical decisions.
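An audit trail is only useful if it can be trusted later. One common technique is a hash-chained, append-only log in which each entry stores the hash of the previous entry, so any after-the-fact edit breaks the chain. The sketch below uses Python's standard `hashlib` and `json` modules; the entry fields and the `AuditTrail` class are assumptions, and a production system would also need durable storage and its own access controls.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only log of AI-influenced decisions, chained by SHA-256 hashes."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, patient_ref: str, tool: str, ai_output: str, action_taken: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "patient_ref": patient_ref,  # pseudonymous reference, not raw PHI
            "tool": tool,
            "ai_output": ai_output,
            "action_taken": action_taken,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; any edited or reordered entry fails."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```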

Staff education: Training clinical and administrative staff not just on how to use specific AI tools but on foundational AI literacy—understanding limitations, recognizing potential errors, and knowing how to report issues. Governance programs should track both failure modes: under-reliance, where valid AI outputs are routinely ignored, and over-reliance, where clinicians accept AI recommendations without scrutiny. Structured reporting channels and periodic competency reviews help catch either pattern before it affects patient outcomes.
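Both failure modes can be made visible with two rates computed from reviewed cases. This minimal sketch assumes each case records whether the clinician accepted the AI recommendation and whether the recommendation was correct in hindsight (which itself requires adjudication). The 0.3 limits are hypothetical.

```python
def reliance_rates(cases: list[tuple[bool, bool]]) -> dict[str, float]:
    """cases: (accepted, ai_was_correct) pairs from retrospective review.

    under_reliance: share of correct recommendations that were overridden.
    over_reliance: share of incorrect recommendations that were accepted.
    """
    correct = [accepted for accepted, ok in cases if ok]
    incorrect = [accepted for accepted, ok in cases if not ok]
    under = sum(not a for a in correct) / len(correct) if correct else 0.0
    over = sum(a for a in incorrect) / len(incorrect) if incorrect else 0.0
    return {"under_reliance": under, "over_reliance": over}


def flag_reliance(rates: dict[str, float], max_under: float = 0.3, max_over: float = 0.3) -> list[str]:
    """Return the names of any rates that exceed their (hypothetical) limits."""
    limits = (("under_reliance", max_under), ("over_reliance", max_over))
    return [name for name, limit in limits if rates[name] > limit]
```

Tracking the two rates separately matters: a single overall agreement figure can look healthy while clinicians are both ignoring good recommendations and rubber-stamping bad ones.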

How to Implement a Healthcare AI Governance Framework

Governance is a lifecycle process, not a project milestone. It spans from initial strategy and tool selection through validation, deployment, training, and continuous monitoring—and must be revisited as tools, regulations, and patient populations evolve.

Step 1: Assess Current Governance Maturity

Before building a governance framework, organizations must honestly assess where they currently stand. Consider a tiered maturity model:

- Ad hoc/reactive: Relying entirely on vendor claims and user reports

- Developing: Basic policies documented but inconsistently enforced

- Defined: Formal governance body with documented processes

- Managed: Quantitative monitoring and feedback loops

- Optimized/leading: Real-time monitoring infrastructure and continuous improvement

Governance readiness questions:

- Do we have a named governance body with decision rights?

- Do we have documented AI policies covering selection, validation, and monitoring?

- Do we have a process for reporting AI-related adverse events?

- Can we produce a complete AI audit trail within 30 days for regulators?

Only 22% of hospitals said they are highly confident they could produce a complete AI audit trail within 30 days, with confidence lowest among small hospitals at 15%.

Step 2: Establish the Governance Body and Policies

The governance body should be formalized with defined roles and decision rights covering:

- AI tool selection criteria and risk classification

- Ethical standards for AI use

- Data use and privacy protocols

- Equitable access policies

- Escalation procedures for adverse events
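Risk classification criteria are easier to apply consistently when they are explicit. A minimal sketch, assuming three hypothetical tiers and a few yes/no attributes captured when a tool is proposed; these criteria are illustrative and are not the FDA's or the EU AI Act's classification rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolProfile:
    name: str
    fda_regulated_samd: bool
    influences_diagnosis_or_treatment: bool
    uses_phi: bool


def risk_tier(tool: ToolProfile) -> str:
    """Hypothetical tiering: clinical impact first, then data sensitivity."""
    if tool.fda_regulated_samd or tool.influences_diagnosis_or_treatment:
        return "high"
    if tool.uses_phi:
        return "moderate"
    return "low"
```

Keeping the attributes on a structured intake record also gives the governance body a documented basis for each classification decision.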

Policies should align with internal standards and external regulatory frameworks. The organization's governing board should receive regular updates on AI use and outcomes, treating AI governance with the same rigor as quality and patient safety reporting.

Step 3: Implement Controls and Deploy with Governance Built In

Once policies are established, the next step is putting them into practice. Governance controls should be embedded from the start—retrofitting them after deployment is both costly and incomplete.

Pre-deployment requirements:

- Require vendors to provide documentation of bias evaluations, validation datasets, and known limitations before selection

- Execute Business Associate Agreements for HIPAA-covered data

- Apply data minimization standards

- Run shadow deployments before going live
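The pre-deployment requirements above can be enforced as a go-live gate that reports exactly which items are still outstanding. A minimal sketch; the checklist keys mirror the list above, while the function names and the dictionary-based status record are hypothetical.

```python
# Keys mirror the pre-deployment requirements; names are illustrative.
PRE_DEPLOYMENT_CHECKS = (
    "vendor_bias_and_validation_docs",
    "business_associate_agreement",
    "data_minimization_review",
    "shadow_deployment_completed",
)


def go_live_blockers(status: dict[str, bool]) -> list[str]:
    """Return unmet checks in checklist order; a missing key counts as unmet."""
    return [check for check in PRE_DEPLOYMENT_CHECKS if not status.get(check, False)]


def can_go_live(status: dict[str, bool]) -> bool:
    return not go_live_blockers(status)
```

Treating a missing entry as unmet keeps the gate fail-closed. A real gate would likely also allow a documented "not applicable" status, for example a Business Associate Agreement for a tool that never touches HIPAA-covered data.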

Organizations working with AI engineering partners should confirm that governance requirements—auditability, role-based access controls, and encrypted data handling—are built into the architecture from day one, not layered on after deployment.

Cybic, for example, integrates these controls at the platform level, enabling healthcare organizations to maintain a consistent security posture across cloud, hybrid, and on-premises environments without locking into a single vendor ecosystem.

Step 4: Train Staff and Enable Feedback Loops

Deployment without role-specific training creates conditions for both under-reliance and over-reliance.

Minimum requirements:

- Define what training is required before each AI tool goes live

- Establish clear channels for staff to report AI errors or unexpected outputs

- Create mechanisms for feedback to reach both internal governance teams and external vendors

- Document training completion and maintain records for audit purposes
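Training requirements and completion records can double as an access control: a user is enabled on a tool only once every required module is documented. A minimal sketch with hypothetical module names and in-memory records; a real system would persist completions for audit.

```python
from datetime import date

# Hypothetical: required training modules per AI tool, defined before go-live.
REQUIRED_TRAINING: dict[str, set[str]] = {
    "sepsis alert": {"ai_literacy_basics", "sepsis_alert_workflow"},
}

# Completion records kept for audit: (user, module) -> completion date.
completions: dict[tuple[str, str], date] = {}


def record_completion(user: str, module: str, when: date) -> None:
    completions[(user, module)] = when


def missing_training(user: str, tool: str) -> set[str]:
    required = REQUIRED_TRAINING.get(tool, set())
    return {m for m in required if (user, m) not in completions}


def may_use(user: str, tool: str) -> bool:
    """Tools with no defined training requirement are refused, not silently allowed."""
    return tool in REQUIRED_TRAINING and not missing_training(user, tool)
```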

Step 5: Monitor, Audit, and Iterate

Post-deployment monitoring should be scaled to risk: clinical AI tools influencing diagnosis or treatment decisions require more frequent and structured review than administrative tools.

Best practices:

- Use existing quality improvement and patient safety infrastructure wherever possible to avoid creating parallel governance structures

- Predefine thresholds for when model retraining or escalation is required

- Document all monitoring actions for auditability

- Schedule regular governance committee reviews of AI tool performance

- Track adverse events and near-misses systematically
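Risk-scaled monitoring can be encoded as a review interval per risk tier, so overdue reviews surface automatically. The intervals below are hypothetical placeholders (roughly quarterly for high-risk clinical tools); each organization should set its own.

```python
from datetime import date, timedelta

# Hypothetical review intervals by risk tier, in days.
REVIEW_INTERVAL_DAYS = {"high": 90, "moderate": 180, "low": 365}


def next_review(last_review: date, tier: str) -> date:
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[tier])


def overdue_tools(inventory: dict[str, tuple[str, date]], today: date) -> list[str]:
    """inventory maps tool name -> (risk tier, date of last governance review)."""
    return sorted(
        name for name, (tier, last) in inventory.items() if next_review(last, tier) < today
    )
```

A list like this can feed the regular governance committee agenda directly, which keeps monitoring inside existing quality review routines rather than in a parallel process.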

Key Factors That Affect AI Governance Effectiveness in Healthcare

Organizational Size and Resource Availability

Smaller healthcare organizations often lack in-house data science capabilities, dedicated AI ethics staff, or the infrastructure to run real-time monitoring systems. Governance frameworks must be right-sized—not one-size-fits-all.

The median share of 2026 IT and quality or safety budgets allocated to AI governance and safety is 4.2%. Large systems dedicate a median of 6.8%, compared with 2.3% among small hospitals. Given this disparity, a framework built for a large academic medical center won't translate directly to a small community clinic.

For resource-limited organizations:

- Start with basic vendor documentation requirements and incident reporting

- Use existing quality and patient safety infrastructure

- Scale governance capabilities incrementally using a maturity-based approach

- Consider partnerships with AI engineering firms that embed governance controls at the architectural level

Patient Population Characteristics

The demographic composition of the patient population shapes governance priorities. A health system serving a racially diverse urban population faces different algorithmic bias risks than one serving a more homogeneous rural population.

Governance frameworks should explicitly address equity by requiring that AI tools be tested and validated on representative subgroups, not just aggregate performance metrics.

This is not a theoretical risk. Studies show that models predicting ICU mortality, 30-day psychiatric readmission, and asthma exacerbation performed worse in populations with lower socioeconomic status, a gap that aggregate metrics alone won't surface.

Regulatory Environment and Tool Classification

Population characteristics determine governance priorities internally — but regulatory classification determines the external compliance bar. AI tools regulated by the FDA as SaMD require more rigorous oversight than administrative automation tools.

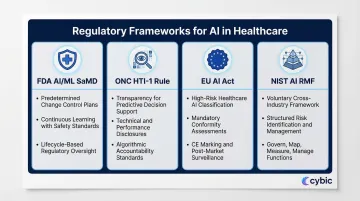

Key regulatory frameworks:

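- FDA AI/ML SaMD: Finalized guidance on Predetermined Change Control Plans (PCCP) lets manufacturers pre-specify planned model modifications, and how those modifications will be validated, so that changes made within an authorized plan do not require a new marketing submission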

- ONC HTI-1 rule: Requires transparency for predictive decision support interventions, including technical and performance disclosures

- EU AI Act: Classifies certain healthcare AI as high-risk, triggering mandatory conformity assessments before deployment

- NIST AI RMF: A voluntary framework applicable across industries, offering structured guidance for AI risk identification and management

Common Mistakes and Misconceptions in Healthcare AI Governance

Treating Governance as a One-Time Procurement Checkpoint

Many organizations conduct a governance review at the point of AI tool selection, then consider the process complete. AI systems don't stay static — models drift, data inputs change, tools get updated, and patient populations shift.

Just 29% of hospitals reported having implemented and enforced policies covering AI model inventory, lineage and sign-offs. Nearly half, 48%, are still drafting such policies. Governance is a continuous lifecycle responsibility, not a gate that a tool passes through once.
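An AI model inventory with lineage and sign-offs can start as a simple structured record. The sketch below assumes hypothetical fields (version, parent version, training data description, sign-off roles) and treats a model version as approved only when every required role has signed off.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical roles whose sign-off is required before a version is approved.
REQUIRED_SIGNOFFS = frozenset({"clinical_lead", "privacy_officer", "governance_chair"})


@dataclass
class ModelRecord:
    tool: str
    version: str
    parent_version: Optional[str]  # lineage: the version this one was derived from
    training_data: str             # description or dataset identifier
    signoffs: set[str] = field(default_factory=set)

    def approved(self) -> bool:
        return REQUIRED_SIGNOFFS <= self.signoffs


def lineage(inventory: dict[tuple[str, str], ModelRecord], tool: str, version: str) -> list[str]:
    """Walk parent links from a version back to the original release."""
    chain: list[str] = []
    current: Optional[str] = version
    while current is not None:
        chain.append(current)
        current = inventory[(tool, current)].parent_version
    return chain
```

Because every update becomes a new record linked to its parent, the inventory also answers the audit-trail question raised earlier: which version was in use, on what data it was trained, and who approved it.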

Equating Vendor Validation with Local Validation

A vendor's published validation data, FDA clearance, or third-party certification does not guarantee that an AI tool will perform well in a specific organization's patient population or clinical workflow context.

The Epic Sepsis Model achieved an AUC of 0.63 in external validation, substantially worse than the 0.76-0.83 reported by its developer. A model trained on younger, urban populations can fail quietly when deployed in an older rural community.

Local validation against the actual deployment context is a baseline governance requirement — not an optional step.

Assuming Governance Is an IT or Compliance Responsibility Alone

Ownership is a similar blind spot: governance fails when it's siloed in IT or legal teams rather than shared with the people who understand what's actually at stake. Effective governance requires:

- Clinical experts who can evaluate whether AI outputs align with care standards

- Operational leaders who can assess workflow integration risks

- Patient advocates who can flag equity concerns

- Executive sponsors who maintain board-level accountability

Governance without clinical and operational co-ownership produces policies that look good on paper but aren't followed in practice.

Frequently Asked Questions

What is an AI governance framework in healthcare?

An AI governance framework in healthcare is a structured system of oversight bodies, policies, and technical controls governing how AI tools are selected, validated, deployed, and monitored in clinical and administrative settings. It differs from generic IT policy by addressing healthcare-specific regulatory obligations (HIPAA, FDA), patient safety risks, and algorithmic bias concerns.

Why is AI governance especially critical in healthcare compared to other industries?

Healthcare AI directly impacts patient safety and operates under strict HIPAA and FDA obligations. It also carries heightened algorithmic bias risks across vulnerable patient populations. When AI influences clinical decisions without adequate transparency or validation, it erodes trust and creates liability exposure that most other industries simply don't face.

Who should be on a healthcare AI governance committee?

Core roles include executive leadership, clinical experts, IT and data science staff, legal and compliance professionals, cybersecurity officers, and patient representatives. The committee must hold formal decision authority — not just an advisory role.

What regulations govern AI in healthcare organizations?

Core regulations include HIPAA Privacy and Security Rules, FDA oversight of AI/ML-based Software as a Medical Device (SaMD), and HHS OCR Section 1557 prohibiting algorithmic discrimination. The EU AI Act, the voluntary NIST AI RMF, and emerging guidance such as the Joint Commission/CHAI RUAIH framework add further compliance considerations.

How often should AI tools be monitored after deployment in healthcare?

Monitoring frequency should be risk-stratified: clinical AI tools influencing patient care decisions require more frequent review (often quarterly or continuous) than administrative tools. Organizations should predefine performance thresholds that trigger escalation, retraining, or decommissioning.

Can small or resource-limited healthcare organizations implement meaningful AI governance?

Yes. Start with existing quality and patient safety infrastructure, add basic vendor documentation requirements and incident reporting, then scale incrementally using a maturity-based approach. Partnering with AI engineering firms that embed governance controls at the architectural level helps resource-limited organizations avoid building duplicate oversight structures from scratch.