Introduction

Financial institutions face pressure from every direction: more data to process, faster risk decisions to make, more demanding customers to serve, and compliance frameworks that grow more complex each year.

Traditional automation and rule-based AI tools, built to respond only to predefined triggers, weren't designed for this environment.

AI agents change the calculus entirely. Unlike rule-based systems or generative AI that waits for human prompts, AI agents in finance can perceive real-time data, reason across systems, plan multi-step actions, and execute decisions autonomously. Gartner predicts that 15% of daily work decisions will be autonomously made by agentic AI by 2028, up from 0% in 2024, a scale of transformation that will reshape how financial services operate.

This article examines where that transformation is already happening and where it's headed. It covers the top use cases, real-world examples with measurable impact, key benefits, governance considerations, and what to evaluate when selecting an AI agent solution for your institution.

TL;DR

- AI agents operate with goal-directed autonomy, planning and adapting across workflows with minimal human intervention

- High-value use cases include fraud detection, compliance monitoring, financial reporting, customer engagement, and algorithmic trading

- Deployed AI agents deliver measurable gains in efficiency, accuracy, and risk coverage

- Effective implementation starts with internal, lower-risk workflows before scaling to customer-facing processes

- Platform selection hinges on governance architecture, infrastructure flexibility, and fit for regulated environments

AI Agents in Financial Services: An Overview

AI agents in financial services are intelligent systems that combine large language models (LLMs), machine learning, and workflow orchestration to perceive real-time data, make context-sensitive decisions, and take action. They differ from chatbots, RPA bots, and static generative AI tools that rely on human prompting for every step.

What makes AI agents distinct:

- Perception: Continuously monitor transaction streams, market signals, and regulatory feeds

- Reasoning: Analyze context across multiple data sources to assess risk or identify patterns

- Planning: Break complex goals into multi-step workflows

- Execution: Take autonomous actions within governed parameters

- Adaptation: Learn from outcomes and adjust approaches over time

Financial services is a particularly high-stakes environment for agentic AI. The industry handles sensitive data, operates under strict regulations (KYC, AML, Basel, SEC requirements), and faces constant pressure from fraud, market volatility, and customer expectations. That pressure is driving rapid adoption. Financial institutions aren't waiting to see where agentic AI lands; they're already deploying it in production. The use cases below reflect where measurable value is being generated right now.

Top Use Cases for AI Agents in Financial Services

These use cases reflect documented enterprise deployments with measurable operational impact across banking, insurance, asset management, and fintech. Each represents a workflow where AI agents outperform rule-based automation or human-only processes and where the evidence is specific enough to act on.

Fraud Detection and Real-Time Risk Monitoring

AI agents continuously scan transaction streams, account behavior, and external signals in real time, making dynamic risk assessments rather than applying fixed rules. This enables institutions to flag suspicious activity, such as sudden large transfers from dormant accounts or unusual cross-border patterns, and trigger automated responses before losses occur.
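As a rough illustration of scoring the signals mentioned above, the sketch below combines a dormant-account check, a cross-border flag, and a comparison against the account's typical transfer size. Every name and threshold here (`Transaction`, `DORMANT_DAYS`, `LARGE_AMOUNT`, `REVIEW_POINTS`, the point weights) is a hypothetical value chosen for the example, not taken from any deployment cited in this article. A production agent would typically rely on learned models rather than hand-set weights.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    account_id: str
    amount: float
    days_since_last_activity: int
    is_cross_border: bool


# Hypothetical thresholds, for illustration only.
DORMANT_DAYS = 180
LARGE_AMOUNT = 10_000.0
REVIEW_POINTS = 50


def risk_points(tx: Transaction, typical_amount: float) -> int:
    """Add up integer risk points (0-100) from a few simple signals."""
    points = 0
    if tx.days_since_last_activity >= DORMANT_DAYS and tx.amount >= LARGE_AMOUNT:
        points += 60  # sudden large transfer from a dormant account
    if tx.is_cross_border:
        points += 20  # cross-border movement adds some risk
    if typical_amount > 0 and tx.amount > 5 * typical_amount:
        points += 20  # far above this account's usual transfer size
    return min(points, 100)


def decide(tx: Transaction, typical_amount: float) -> str:
    """Hold the transaction for review when the score crosses the threshold."""
    if risk_points(tx, typical_amount) >= REVIEW_POINTS:
        return "hold_for_review"
    return "allow"
```

The score depends on the account's own history (`typical_amount`) rather than one fixed limit for every customer, which is the difference between a dynamic assessment and a static rule.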

Documented impact:

IBM's deployment at Askari Bank reduced daily security incidents from approximately 700 to fewer than 20, while slashing remediation times from 30 minutes to just 5 minutes. A top 10 US bank prevented $47 million in fraud using AI-driven detection, achieving 94% accuracy and reducing false positives by 73%.

Traditional rule-based systems generate overwhelming false positives that exhaust fraud teams. AI agents filter noise, prioritize genuine threats, and run response playbooks without manual intervention, freeing analysts to focus on complex investigations rather than routine triage.

Regulatory Compliance Monitoring

AI agents continuously review transactions, contracts, and client communications against evolving regulatory requirements, checking for KYC completeness, AML triggers, fair-lending compliance, and disclosure accuracy. This replaces periodic manual audits with always-on, documented oversight.

The compliance burden is massive. LexisNexis reports the true cost of financial crime compliance has reached $85 billion in EMEA and $61 billion in the US and Canada, with labor costs as a primary driver.

AI agents can ingest regulatory update feeds, identify changes relevant to the institution, and surface recommended policy adjustments, reducing the compliance team's scanning and interpretation workload. Thomson Reuters reports that 62% of compliance respondents spend between 1 and 7 hours per week tracking regulatory developments, with Global Systemically Important Banks spending 8-10 hours weekly on these tasks.

Financial Reporting and Audit Support

AI agents connect to ERP platforms, billing systems, and external data feeds via APIs to continuously gather and validate financial data. They catch journal entry mismatches, revenue recognition errors, or missing disclosures as they occur rather than at month-end close, cutting reporting cycles significantly.
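One of the simplest checks described above, catching a journal entry whose debits and credits do not match, can be sketched as follows. The input shape (tuples of entry ID, debit, credit, with amounts as strings) and the function name `find_unbalanced_entries` are assumptions made for this example. A real ERP integration would supply its own schema.

```python
from collections import defaultdict
from decimal import Decimal


def find_unbalanced_entries(lines):
    """Return {entry_id: debit_minus_credit} for entries that do not net to zero.

    `lines` is an iterable of (entry_id, debit, credit) tuples with amounts
    given as strings, so Decimal can parse them without float rounding.
    """
    totals = defaultdict(lambda: Decimal("0"))
    for entry_id, debit, credit in lines:
        totals[entry_id] += Decimal(debit) - Decimal(credit)
    # A balanced entry nets to exactly zero; anything else is a mismatch.
    return {entry_id: diff for entry_id, diff in totals.items() if diff != 0}


ledger_lines = [
    ("JE-1", "100.00", "0"),
    ("JE-1", "0", "100.00"),
    ("JE-2", "250.00", "0"),
    ("JE-2", "0", "205.00"),  # likely a transposed figure
]
mismatches = find_unbalanced_entries(ledger_lines)
```

Running a check like this as each entry is posted, rather than once at month-end, is what lets the mismatch surface while the source transaction is still fresh.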

Ventana Research benchmarks the average close at 6.4 business days for public companies. IBM's internal Jobotx initiative projects a greater than 90% reduction in cycle time for journal processing with an estimated $600,000 in annual savings.

Governance requirement: As AI agents participate in audit workflows, explainability and audit trail generation are non-negotiable. Agents must log every action, flag decision rationale, and support human review for regulated outputs. Speed without traceability creates regulatory exposure, not efficiency.
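A minimal sketch of such an audit trail might look like the following. Each record stores the action, the rationale, and a human-review flag. Chaining each record to the hash of the previous one is an assumption added here as one common way to make later edits detectable. The class name `AuditLog` and its fields are hypothetical, not a description of any particular platform.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained record of agent actions (illustrative only)."""

    def __init__(self):
        self._records = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, agent_id, action, rationale, needs_human_review):
        prev_hash = self._records[-1]["hash"] if self._records else ""
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "rationale": rationale,
            "needs_human_review": needs_human_review,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._records.append(entry)
        return entry

    def verify(self):
        """Return True if no record was altered or removed from the chain."""
        prev_hash = ""
        for entry in self._records:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

Because every record carries its rationale alongside the action, a reviewer can reconstruct why the agent acted, and `verify()` gives a quick check that the trail has not been edited after the fact.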

Customer Service and Hyper-Personalized Engagement

AI agents in customer-facing financial services go beyond chatbots. They coordinate across core banking systems, transaction histories, and product databases to deliver personalized guidance. A practical example: proactively suggesting a savings reallocation to a customer whose spending pattern shows consistent month-end shortfalls.

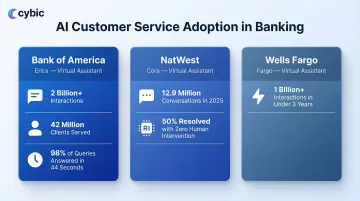

Scale of adoption:

- Bank of America's Erica has surpassed 2 billion interactions, serving 42 million clients with 98% getting answers within 44 seconds

- NatWest's Cora handled 12.9 million conversations in 2025, with 50% requiring no human intervention

- Wells Fargo's Fargo supported customers through more than 1 billion interactions in less than three years

The value is twofold: operational (deflecting routine inquiries and accelerating account management tasks like dispute resolution or loan status updates) and strategic (democratizing financial advice previously reserved for high-net-worth clients).

Algorithmic Trading and Portfolio Optimization

AI agents in trading environments monitor market signals, news events, and portfolio exposure in real time. They can execute pre-approved rebalancing actions or adjust risk parameters faster than any human trader. For example, when a geopolitical event shifts commodity prices, an agent can rebalance energy exposure across a portfolio within seconds.

JPMorgan's LOXM trading system uses reinforcement learning to optimize trade timing, reducing market impact costs by 20% while processing over 1 million trade decisions daily with mandatory trader oversight.

Governance in practice: Enterprise deployments in regulated institutions enforce human-in-the-loop checkpoints for actions above defined thresholds. AI agents operate within approved parameters with full decision logging for auditability. The cost of getting this wrong is concrete: the SEC imposed $90 million in penalties on Two Sigma Investments after an employee made unauthorized changes to investment models that went unaddressed by management.

Key Benefits of AI Agents for Financial Services

AI agents deliver concrete operational benefits: increased throughput (handling data volumes impossible for human teams), reduced cycle times for reporting and compliance reviews, and earlier error detection. McKinsey estimates that agentic AI could lower operational costs by 20% or more, equivalent to 9% to 15% of operating profits.

Measurable gains include:

- PwC analysis indicates institutions that fully embrace AI could drive up to a 15-percentage-point improvement in their efficiency ratio

- AI-driven AML systems can reduce false positives by 70% while improving detection of high-risk events by 30%

- The Association of Certified Fraud Examiners estimates organizations lose 5% of revenue annually to fraud; AI agents can directly reduce this exposure

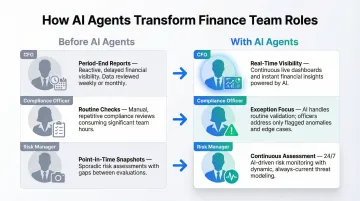

Beyond the numbers, AI agents shift finance teams away from reactive, process-heavy work toward analytical and judgment-intensive roles:

- CFOs gain real-time visibility rather than waiting for period-end reports

- Compliance officers focus on exceptions, not routine checks

- Risk managers move from point-in-time snapshots to continuous assessment

Financial inclusion is a concrete upside that often gets overlooked in ROI discussions. AI agents make cost-per-interaction economics viable at scale, extending credit assessment, financial planning, and banking support to underserved customer segments. That opens new markets while advancing social impact goals.

Governance and Challenges of AI Agents in Finance

The core governance challenges unique to financial services AI agents are significant and must be addressed architecturally, not as afterthoughts.

Explainability: Regulators and auditors require transparent reasoning behind credit decisions, compliance flags, and risk assessments. The CFPB's Circular 2022-03 explicitly requires creditors using complex algorithms to provide specific, accurate reasons for adverse actions. Black-box outputs don't meet that bar.

Bias risk: Agents trained on historical financial data may replicate past inequities in lending or credit scoring. Financial institutions must implement bias detection and mitigation mechanisms before deploying agents in customer-facing decisions.

Accountability gaps: When an agent takes an action that causes harm, ownership must be assigned in advance. Governance frameworks need to specify who owns agent behavior and what the escalation path looks like when something goes wrong.

Legacy integration challenge: Many financial institutions run core systems that were not designed for real-time data exchange with AI agents. According to a Deloitte survey, nearly 60% of AI leaders cite integrating with legacy systems and addressing risk and compliance concerns as their primary challenges. Successful agentic AI deployments require investment in API layers, data quality pipelines, and orchestration infrastructure before agents can operate reliably.

Human-in-the-loop imperative: Even in high-automation environments, financial institutions must define which agent actions trigger mandatory human review. That typically includes:

- Credit decisions above defined thresholds

- Compliance flags with direct regulatory exposure

- Customer-facing communications on sensitive matters

These checkpoints belong in the architecture from the start, not bolted on later.
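The three review triggers listed above can be expressed as a small routing function that sits between the agent and any system it acts on. This is a sketch under stated assumptions: the `AgentAction` fields, the `CREDIT_REVIEW_THRESHOLD` value, and the queue names are hypothetical, and each institution would define its own policy.

```python
from dataclasses import dataclass

# Hypothetical policy value; an institution would set its own threshold.
CREDIT_REVIEW_THRESHOLD = 50_000


@dataclass
class AgentAction:
    kind: str  # e.g. "credit_decision", "compliance_flag", "customer_message"
    amount: float = 0.0
    regulatory_exposure: bool = False
    sensitive: bool = False


def requires_human_review(action: AgentAction) -> bool:
    """Apply the mandatory-review rules before anything is executed."""
    if action.kind == "credit_decision":
        return action.amount > CREDIT_REVIEW_THRESHOLD
    if action.kind == "compliance_flag":
        return action.regulatory_exposure
    if action.kind == "customer_message":
        return action.sensitive
    # Unknown action types fail safe: send them to a person.
    return True


def route(action: AgentAction) -> str:
    """Return the queue an action should go to."""
    return "human_review_queue" if requires_human_review(action) else "auto_execute"
```

Defaulting unrecognized action types to human review is a deliberate choice in this sketch: a new agent capability then has to be explicitly approved for automation rather than slipping through unreviewed.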

Gartner warns that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The institutions that avoid that outcome share one trait: they treat governance as an engineering problem, not a compliance checkbox.

What to Look for When Choosing an AI Agent Platform for Finance

Financial institutions should apply rigorous evaluation criteria when selecting an AI agent platform.

Governance Architecture

Security, role-based access control (RBAC), audit logging, and regulatory alignment should be built into the platform at the infrastructure level, not added after the fact. Look for:

- Role-based access controls for secure system access

- Encrypted data protection in transit and at rest

- Auditability and traceability of AI-driven actions and workflows

- Strict data governance, including guarantees that proprietary financial data will not be used to train external models

Infrastructure Flexibility

The platform needs to operate across cloud, hybrid, and on-premises environments without locking the institution into a rigid ecosystem. Financial institutions face complex infrastructure constraints driven by regulation, security requirements, and legacy systems; a rigid deployment model creates risk, not value.

These platform criteria only matter if the vendor can actually deliver against them. Institutions should look for engineering-led partners who build and integrate systems into existing workflows, not firms that hand over prototypes. Demonstrated experience with regulated environments, where compliance and audit trails are operational requirements, is the differentiator that matters most.

Cybic's Drava platform is built for exactly this context. It connects enterprise data, machine learning, AI reasoning, and intelligent agents within a governed automation framework.

Governance, security controls, and regulatory alignment are embedded at the architectural level, not configured on top. Drava supports cloud, hybrid, and on-premises deployments while maintaining strict data protection and full audit trail requirements.

Where to start: Before committing to a platform, run a structured pilot on two or three lower-risk internal workflows. Good candidates include:

- Reconciliation processing

- Disclosure document preparation

- Compliance document review

Use those pilots to stress-test integration capability, governance controls, and output quality before scaling to customer-facing or regulatory-critical processes.

Conclusion

AI agents are reshaping financial services operations now, not in some future roadmap. The institutions gaining competitive advantage today are those treating governance, integration, and human-AI collaboration as first-order engineering requirements, not secondary concerns.

That governance imperative is backed by regulatory pressure from every direction. The SEC's 2025 Examination Priorities focus heavily on AI washing and the supervision of AI in trading and AML. Meanwhile, the EU AI Act's transparency requirements for high-risk systems, including credit scoring, will force global institutions to overhaul model risk management frameworks.

The regulatory environment is tightening, not loosening.

If you're evaluating AI agent solutions for financial operations, explore how Cybic designs and deploys governed AI automation systems built for regulated enterprise environments. Our engineering-led approach prioritizes integration, governance, and operational reality at the architectural level before a line of production code is written.

Frequently Asked Questions

How are AI agents used in financial services?

AI agents are deployed across fraud detection, compliance monitoring, financial reporting, customer service, and trading. They operate autonomously to process real-time data, execute actions, and coordinate across systems, with less human intervention than traditional automation requires.

What are the best AI tools for financial services?

The right tools depend on use case and governance requirements. Common categories include agentic orchestration platforms, LLM-based compliance assistants, and real-time fraud detection systems. Enterprise platforms should be evaluated on their ability to integrate with existing infrastructure, maintain audit trails, enforce access controls, and support regulated workflows, not on feature count alone.

What are the trends for AI agents in 2025?

In 2025, financial institutions are moving from single-agent deployments to multi-agent orchestration. Regulatory scrutiny is accelerating demand for explainable and auditable AI, and organizations are shifting from internal pilots to customer-facing and compliance-critical production deployments.

What is the difference between AI agents and traditional RPA or automation in financial services?

Traditional RPA follows fixed, rule-based scripts and breaks when conditions change. AI agents can reason about context, adapt to new data, make multi-step decisions, and handle exceptions, which makes them suitable for complex, judgment-intensive financial workflows that RPA cannot handle.

What are the biggest governance challenges of deploying AI agents in finance?

The primary challenges are explainability, bias in training data, and data privacy risks. Regulators require transparent reasoning, and high-stakes actions need clearly defined human-in-the-loop checkpoints. These constraints must be addressed at the architectural level before deployment.