Introduction

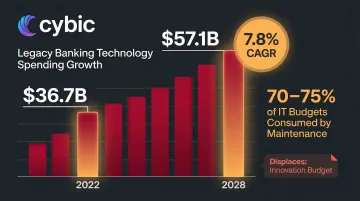

Financial institutions face a critical infrastructure challenge: many still operate on mainframe-era databases built in the 1980s-2000s that were never designed for today's data volumes, API connectivity, or real-time analytics demands. Banks currently spend 70-75% of their IT budgets merely maintaining legacy systems, with the cost of supporting outdated payments technology alone projected to reach $57.1 billion annually by 2028. Meanwhile, regulators are no longer just auditing reports; they are penalizing the underlying data architecture itself, as demonstrated by the OCC's $75 million fine against Citibank, which specifically cited persistent deficiencies in data governance and risk reporting infrastructure.

This guide is written for CIOs, Chief Data Officers, IT architects, and technology leaders at banks, insurers, investment firms, and credit unions managing aging data systems. Modernizing financial databases carries higher stakes than in most industries: regulatory penalties, operational continuity requirements, and sheer data volumes leave little margin for error.

It covers:

- What database modernization means for financial institutions

- Why the urgency is growing now

- How the migration process works in practice

- Which technologies matter and which factors determine success

TL;DR

- Database modernization replaces legacy databases to support cloud infrastructure, AI capabilities, real-time analytics, and compliance requirements

- Financial institutions face mounting pressure from regulatory bodies, fintech competition, and customer expectations that batch-processing systems cannot meet

- Execution follows three phases (assessment, migration, and operationalization), each building on the last rather than replacing systems in a single cutover

- Key technologies include cloud data warehouses, microservices-friendly databases, streaming platforms, and AI-ready data pipelines

- Outcomes depend on governance design and data quality remediation; both must be addressed before migration, not after

What Is Database Modernization?

Database modernization is the process of replacing a financial institution's legacy data architecture with systems built for today's demands: cloud-native infrastructure, real-time processing, and modern access controls. It is more than a software version upgrade.

The goal is a unified data foundation that supports real-time decision-making, regulatory reporting, fraud detection, and AI-driven services across the institution.

How it differs from related concepts:

- Database migration moves data from one system to another; it is a single step within a broader modernization effort

- Data center modernization covers infrastructure transformation: servers, networking, and physical storage

- Database modernization targets the data management layer specifically: schemas, query engines, access controls, and integration architecture

Why Database Modernization Is Critical for Financial Services

The Legacy System Trap

Financial institutions are uniquely constrained by decades-old infrastructure. Over 43% of global banking systems still use COBOL, a programming language developed in the late 1950s. Many operate on mainframe-era databases and on-premise relational systems built long before today's demands existed: sub-second transaction processing at scale, continuous regulatory reporting, granular audit trails, and clean real-time data pipelines for AI/ML models.

Global spending on outdated banking technologies is projected to rise from $36.7 billion in 2022 to $57.1 billion by 2028, with maintenance costs growing at 7.8% annually. This massive capital drain leaves only a fraction of budgets available for innovation.

What Goes Wrong Without Modernization

Legacy databases create severe operational and strategic risks:

- Data silos prevent unified customer views across channels

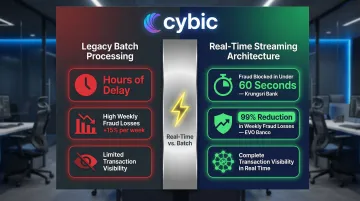

- Batch-processing cycles that stretch beyond 8 hours delay risk reporting and leave institutions exposed to fraud between transaction and analysis

- Compliance gaps due to poor data lineage and manual reporting processes

- High maintenance costs that consume IT budgets at the expense of innovation

The Competitive Pressure

These internal costs compound an external threat. Fintech entrants and challenger banks are built on modern, cloud-native data infrastructure from day one, which lets them launch products, respond to market changes, and serve customers faster than incumbents constrained by legacy systems.

The Regulatory Dimension

Regulators are increasingly demanding more granular, traceable, and frequently updated data submissions. The EU's DORA (Digital Operational Resilience Act) requires 4-hour initial incident reporting, while the SEC's Consolidated Audit Trail (CAT) system requires millisecond-level timestamps. Neither mandate is achievable with legacy 8-hour nightly batch processing.

The OCC's $75 million penalty against Citibank explicitly noted "certain persistent weaknesses remain, in particular with regard to data" and the bank's "lack of processes to monitor the impact of data quality concerns on regulatory reporting." This signals a shift from auditing final reports to penalizing the data architecture that produces them.

How Database Modernization Works in Financial Services

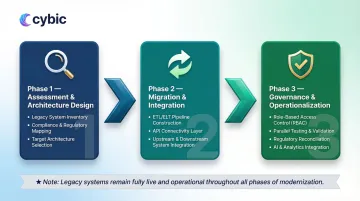

Database modernization is a phased transformation, not a single event. It moves from legacy assessment through architecture design to migration, integration, and operationalization, with governance woven throughout.

Financial institutions typically adopt a "progressive modernization" approach rather than a full cutover. Mission-critical legacy systems stay live during the transition while workloads migrate incrementally, which reduces operational risk without forcing a high-stakes cutover moment.
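The incremental pattern can be pictured as a routing layer in front of both systems. The following is a minimal sketch, not a production design: `WorkloadRouter`, the workload names, and the two backend functions are hypothetical stand-ins for whatever gateway and systems an institution actually runs.

```python
class WorkloadRouter:
    """Send each workload to the legacy or modern backend, one workload at a time."""

    def __init__(self, legacy_backend, modern_backend):
        self.legacy_backend = legacy_backend
        self.modern_backend = modern_backend
        self.migrated = set()  # workloads already cut over

    def cut_over(self, workload):
        self.migrated.add(workload)

    def roll_back(self, workload):
        # Rolling back is just removing the workload from the migrated set.
        self.migrated.discard(workload)

    def handle(self, workload, request):
        backend = self.modern_backend if workload in self.migrated else self.legacy_backend
        return backend(request)


# Hypothetical backends that only label which system served the request.
def legacy_system(request):
    return f"legacy:{request}"


def modern_system(request):
    return f"modern:{request}"


router = WorkloadRouter(legacy_system, modern_system)
before = router.handle("statements", "acct-42")
router.cut_over("statements")
after = router.handle("statements", "acct-42")
untouched = router.handle("payments", "acct-42")
print(before, after, untouched)
```

Because each workload is switched independently, a failed cutover affects only that workload and can be reversed without touching the rest.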

Phase 1: Assessment and Architecture Design

Before any migration begins, teams build a complete picture of the existing data environment. That means inventorying all data assets, mapping interdependencies, and deciding which databases move to cloud, stay on-premises in a hybrid setup, or get decommissioned entirely.

Core activities at this stage:

- Full inventory of existing databases (structured, unstructured, transactional, analytical)

- Data quality assessment and lineage mapping

- Compliance mapping to identify data sovereignty, retention, and access control requirements

- Stakeholder alignment across IT, compliance, risk, and business teams

- Selection of target architecture (cloud providers, data warehouse platforms, governance frameworks)
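The data quality assessment often starts with simple profiling before any tooling is chosen. As a rough sketch, assuming records arrive as dictionaries and using hypothetical field names (`account_id`, `opened_on`, `balance`), the check below counts missing values, duplicate keys, and dates that are not in ISO format:

```python
from collections import Counter
from datetime import date

# Hypothetical sample rows; field names and values are illustrative only.
RECORDS = [
    {"account_id": "A1", "opened_on": "2019-04-01", "balance": "100.50"},
    {"account_id": "A2", "opened_on": "01/05/2020", "balance": "20.00"},
    {"account_id": "A2", "opened_on": "2020-05-01", "balance": "20.00"},
    {"account_id": "A3", "opened_on": "", "balance": None},
]


def is_iso_date(value):
    """Return True if value parses as a YYYY-MM-DD date."""
    try:
        date.fromisoformat(value)
        return True
    except (TypeError, ValueError):
        return False


def profile(records, key, date_field):
    """Count missing values, duplicate keys, and non-ISO dates."""
    missing = sum(1 for r in records for v in r.values() if v in (None, ""))
    key_counts = Counter(r[key] for r in records)
    duplicates = sorted(k for k, n in key_counts.items() if n > 1)
    # Empty dates are already counted as missing, so only non-empty ones are checked here.
    bad_dates = sum(1 for r in records if r[date_field] and not is_iso_date(r[date_field]))
    return {
        "rows": len(records),
        "missing_values": missing,
        "duplicate_keys": duplicates,
        "non_iso_dates": bad_dates,
    }


report = profile(RECORDS, key="account_id", date_field="opened_on")
print(report)
```

Counts like these give the remediation work a measurable baseline before target architecture is finalized.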

Phase 2: Migration, Integration, and Infrastructure Build

This is where data and schemas physically move to new systems. ETL/ELT pipelines handle historical migration while legacy systems continue feeding live data in parallel, keeping operations intact throughout the transition.

What this looks like in practice:

- Schema transformation and historical data migration via ETL/ELT pipelines, run alongside live legacy feeds

- API layer construction and microservices integration

- Connection of modernized databases to upstream applications (core banking, CRM, risk systems)

- Integration with downstream consumers (reporting tools, AI models, customer-facing apps)
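The schema transformation step can be illustrated with a small example. This is a sketch under stated assumptions: the legacy field names (`CUST-NO`, `OPEN-DT`, `BAL-AMT`), the `YYYYMMDD` date layout, and the convention that amounts are stored as integer cents are all hypothetical, chosen to resemble a typical mainframe record.

```python
from datetime import datetime
from decimal import Decimal


def transform(legacy_row):
    """Map one legacy row to the target schema (all field names are hypothetical)."""
    return {
        "customer_id": legacy_row["CUST-NO"].strip(),
        "opened_on": datetime.strptime(legacy_row["OPEN-DT"], "%Y%m%d").date().isoformat(),
        # Assumption: legacy amounts are integer cents with two implied decimals.
        "balance": Decimal(legacy_row["BAL-AMT"]) / Decimal(100),
    }


def migrate(rows):
    """Transform rows, collecting failures for review instead of aborting the batch."""
    loaded, rejected = [], []
    for row in rows:
        try:
            loaded.append(transform(row))
        except (KeyError, ValueError, ArithmeticError) as exc:
            rejected.append((row, repr(exc)))
    return loaded, rejected


legacy = [
    {"CUST-NO": "000123  ", "OPEN-DT": "19980315", "BAL-AMT": "0001250075"},
    {"CUST-NO": "000124  ", "OPEN-DT": "1998-03-15", "BAL-AMT": "100"},  # wrong date layout
]
loaded, rejected = migrate(legacy)
print(loaded)
print(len(rejected), "row(s) rejected")
```

Using `Decimal` rather than floats keeps monetary values exact, and routing bad rows to a reject list keeps one malformed record from halting a historical load.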

Phase 3: Governance, Testing, and Operationalization

With the new environment built, the focus shifts to proving it works and proving it's compliant. Teams stand up the governance framework (RBAC, audit trails, lineage tracking, quality monitoring), run parallel validation against the legacy environment, and only decommission old systems once reconciliation confirms integrity.

At this stage, teams focus on:

- Implementation of governance frameworks with RBAC and audit trails

- Parallel-run testing to validate data integrity

- Regulatory reporting reconciliation

- Performance benchmarking

- AI/analytics integration (connecting modernized databases to machine learning pipelines and business intelligence layers)

- Ongoing monitoring and optimization
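Parallel-run testing comes down to showing that both environments hold the same data. One common way to do that is a keyed comparison of row fingerprints. The sketch below assumes both systems can export rows as dictionaries; the function names and sample fields are illustrative, not part of any specific tool.

```python
import hashlib


def row_fingerprint(row, fields):
    """Hash the selected fields in a fixed order so both systems hash identically."""
    canonical = "|".join(str(row[f]) for f in fields)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(legacy_rows, modern_rows, key, fields):
    """Return keys missing from either side and keys whose contents differ."""
    legacy = {r[key]: row_fingerprint(r, fields) for r in legacy_rows}
    modern = {r[key]: row_fingerprint(r, fields) for r in modern_rows}
    shared = legacy.keys() & modern.keys()
    return {
        "missing_in_modern": sorted(legacy.keys() - modern.keys()),
        "missing_in_legacy": sorted(modern.keys() - legacy.keys()),
        "mismatched": sorted(k for k in shared if legacy[k] != modern[k]),
    }


# Hypothetical exports from the two environments.
legacy_rows = [
    {"id": "T1", "amount": "10.00", "status": "POSTED"},
    {"id": "T2", "amount": "25.50", "status": "POSTED"},
    {"id": "T3", "amount": "7.25", "status": "PENDING"},
]
modern_rows = [
    {"id": "T1", "amount": "10.00", "status": "POSTED"},
    {"id": "T2", "amount": "25.05", "status": "POSTED"},  # transposed digits
    {"id": "T4", "amount": "3.00", "status": "POSTED"},
]
result = reconcile(legacy_rows, modern_rows, key="id", fields=["amount", "status"])
print(result)
```

An empty result across all three lists is the kind of evidence that supports decommissioning; anything else points to the exact keys to investigate.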

Key Technologies Driving Database Modernization in Financial Services

Cloud Data Warehouses and Lakehouses

Platforms like Snowflake, Databricks, AWS Redshift, and Azure Synapse are replacing on-premise data warehouses by enabling elastic scaling, separation of storage and compute, and support for both structured and unstructured financial data.

Real-world impact: FIS achieved 33% cost savings, 7x faster data loading, and up to 20x faster compliance data processing after migrating to Snowflake. These platforms are designed specifically for the analytical and AI workloads financial institutions now depend on.

Microservices and API-First Database Architecture

Breaking monolithic database dependencies into API-accessible, purpose-fit data services (using both relational and NoSQL databases depending on use case) lets financial institutions modernize incrementally. Applications are decoupled from the underlying data stores, which speeds up product development without requiring full system replacement.

AI and Intelligent Data Pipelines

Modern database architecture must be designed to feed AI and ML systems, including real-time streaming data pipelines (Apache Kafka, cloud-native equivalents), feature stores for ML models, and integration with AI reasoning layers.
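The difference between batch and streaming is that each event is evaluated as it arrives. The sketch below illustrates that idea with a sliding-window velocity rule in plain Python. It deliberately uses no Kafka client API; the `VelocityRule` class, thresholds, and event tuples are hypothetical, and in practice `observe` would be called from whatever stream consumer the platform provides.

```python
from collections import defaultdict, deque


class VelocityRule:
    """Flag a card when it exceeds max_events within window_seconds."""

    def __init__(self, max_events=3, window_seconds=60):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._recent = defaultdict(deque)  # card_id -> timestamps still in the window

    def observe(self, card_id, timestamp):
        """Process one event as it arrives; return True if it should be flagged."""
        window = self._recent[card_id]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_events


# Hypothetical (card_id, seconds) events in arrival order.
events = [
    ("card-1", 0), ("card-1", 10), ("card-2", 15),
    ("card-1", 20), ("card-1", 30), ("card-1", 200),
]
rule = VelocityRule(max_events=3, window_seconds=60)
flags = [rule.observe(card, ts) for card, ts in events]
print(flags)
```

The fourth `card-1` event inside 60 seconds is flagged at the moment it arrives, rather than hours later when a batch job runs.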

Fraud detection transformation: Krungsri Bank implemented real-time data streaming to detect and block fraudulent transactions in under 60 seconds, while EVO Banco reduced weekly fraud losses by 99% after adopting Confluent Cloud for real-time transaction processing.

These results depend on a modernized data foundation. Without it, fraud detection models, credit scoring systems, and AI-powered customer analytics cannot operate at the speed or scale financial institutions require.

That foundation is also what makes an intelligence layer viable. Cybic's Drava platform, for example, connects enterprise data, machine learning, and intelligent agents into unified workflows that sit on top of a modernized data infrastructure, turning raw financial data into operational decisions.

Governance and Security Infrastructure

Modern financial database architecture must embed governance from the start rather than add it as an afterthought. That means designing governance into every layer of the architecture:

- Data classification to tag and manage sensitive financial records at ingestion

- Encryption at rest and in transit across all data stores

- Role-based access controls (RBAC) limiting data exposure by role and function

- Immutable audit logs that satisfy regulatory examination requirements

- Regulatory compliance frameworks aligned to applicable standards (SOX, GDPR, BCBS 239)

This principle, governance by design, is what separates a true modernization from a lift-and-shift cloud migration.
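Two of the controls listed above, role-based access control and audit logging, can be sketched together. This is an illustration only: the roles, permissions, and `AuditLog` class are hypothetical, and the hash chain makes tampering detectable rather than impossible. Real deployments typically rely on an IAM system and write-once storage.

```python
import hashlib
import json

# Hypothetical role-to-permission mapping; real policies would come from an IAM system.
ROLE_PERMISSIONS = {
    "teller": {"read:accounts"},
    "risk_analyst": {"read:accounts", "read:risk_reports"},
    "dba": {"read:accounts", "write:accounts"},
}

GENESIS_HASH = "0" * 64


def _chain_hash(prev_hash, event):
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry carries the hash of the previous entry."""

    def __init__(self):
        self.entries = []

    def append(self, event):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        self.entries.append(
            {"event": event, "prev_hash": prev_hash, "hash": _chain_hash(prev_hash, event)}
        )

    def verify(self):
        """Recompute the chain; an edited entry or one removed mid-chain breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != _chain_hash(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


def authorize(log, user, role, permission):
    """Check the permission and record the decision, whether allowed or denied."""
    allowed = permission in ROLE_PERMISSIONS.get(role, set())
    log.append({"user": user, "role": role, "permission": permission, "allowed": allowed})
    return allowed


log = AuditLog()
granted = authorize(log, "alice", "teller", "read:accounts")
denied = authorize(log, "alice", "teller", "write:accounts")
print(granted, denied, log.verify())
```

Logging denials as well as grants matters here: examiners usually want to see attempted access, not only successful access.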

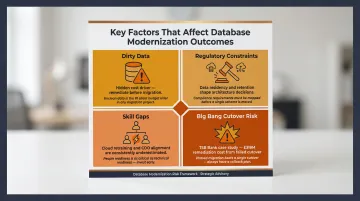

Key Factors That Affect Database Modernization in Financial Services

Dirty data is the hidden cost driver. Inconsistent formats, missing lineage, and duplicate records consistently push timelines and budgets beyond initial estimates. Institutions that remediate data quality before migration outperform those that try to fix it afterward.

Regulatory constraints shape architecture before code is written. Data residency laws, retention mandates, and audit requirements vary by jurisdiction and institution type, and they determine which workloads can move to public cloud and which must stay on-premises or in private cloud. Map these constraints before finalizing architecture decisions, not after.

Skill gaps derail more projects than technology does. Financial institutions routinely underestimate the organizational lift: retraining engineers on cloud-native platforms, governing new data access models across business units, and aligning CDO/CIO mandates with line-of-business priorities. These gaps need to be planned for, not discovered mid-migration.

"Big bang" cutover carries real, documented risk. The 2018 TSB Bank migration illustrates this clearly: a full-system cutover resulted in £318 million in direct costs and £48.65 million in regulatory fines. Phased migration with parallel system operation is the lower-risk alternative, though it demands tighter program management to avoid prolonged dual-system maintenance.

Each of these factors interacts with the others. A compliance constraint can force a phased approach; a skill gap can extend a parallel-run period; unresolved data quality issues can trigger regulatory exposure mid-migration. Addressing them in a coordinated sequence, rather than in isolation, is what separates successful modernization programs from stalled ones.

Conclusion

Database modernization delivers a scalable, governed, AI-ready data foundation that enables real-time operations, regulatory compliance, and competitive product development, replacing the fragmented, high-maintenance legacy systems that constrain growth.

Success here depends less on technology selection and more on how you execute. That means embedding governance at the architectural level, phasing migration to manage risk, and keeping regulatory and business strategy aligned at every decision point. Treat this as a strategic program, not a one-time infrastructure project.

The pressure to act is real. Regulators now scrutinize data architecture directly, not just outputs. Fintech competitors built on modern infrastructure from day one carry structural advantages that compound over time. Three factors separate programs that deliver from those that stall:

- Governance by design: built into the architecture, not bolted on after deployment

- Phased execution: sequenced to reduce risk while delivering incremental business value

- Regulatory alignment: technical decisions mapped to compliance requirements before implementation begins

Frequently Asked Questions

What is database modernization?

Database modernization is the process of updating or replacing outdated database systems with modern architectures. It covers cloud migration, schema redesign, and integration of real-time and AI-capable data infrastructure to meet current performance, scalability, and compliance demands.

What is data center modernization?

Data center modernization refers to the broader transformation of physical and virtual IT infrastructure (servers, networking, storage, virtualization), whereas database modernization is specifically about the data management layer. The two often occur together but are distinct initiatives with different scopes.

Which database is best for financial data?

Financial institutions typically use a combination of database types rather than a single solution:

- Relational databases (PostgreSQL, Oracle) for transactional workloads

- Cloud data warehouses (Snowflake, Redshift) for analytics

- NoSQL databases for unstructured or high-velocity data

The right choice depends on your use case, regulatory requirements, and target architecture.

What is the new technology for financial services?

Key technologies reshaping financial services data infrastructure include:

- Cloud-native data platforms

- AI/ML pipelines

- Real-time streaming systems (Apache Kafka)

- Open banking APIs

- Governed data lakehouses

Each of these requires a modernized database foundation to function effectively.

Why is data important in financial services?

Data drives every core function in financial services: credit decisions, fraud detection, regulatory reporting, customer personalization, and risk management. The speed, accuracy, and accessibility of that data directly determine a financial institution's competitive performance and compliance standing.

What are the 5 C's of data governance?

The 5 C's of data governance are:

- Consistency: uniform data definitions across systems

- Completeness: no missing or partial records

- Currency: data is up to date

- Conformity: data meets defined standards and formats

- Confidence: data can be trusted for decisions and reporting

These principles are especially critical in financial services, where data quality directly affects regulatory reporting accuracy, AI model reliability, and audit defensibility.