Custom AI development represents a shift from adapting your business to fit generic tooling, to engineering AI systems around how your organization actually operates. Rather than forcing workflows into pre-built templates, custom AI integrates directly into existing infrastructure, embeds governance by design, and produces outcomes tailored to specific industry requirements.

The transformation is measurable. Custom-built AI delivers operational improvements by connecting to the systems you already use, such as ERPs, CRMs, and legacy databases, and by learning from your proprietary data without exposing it to external model training. This produces accuracy, relevance, and reliability that generic platforms structurally cannot match.

TLDR

- Custom AI integrates directly with legacy systems, ERPs, and proprietary data environments without requiring infrastructure replacement

- Governance, security controls, and auditability are embedded at the architectural level rather than retrofitted after deployment

- Custom AI drives measurable outcomes across sales automation, predictive analytics, document processing, and industry-specific use cases like predictive maintenance

- The decision to build custom AI is driven by operational complexity, compliance requirements, and long-term competitive advantage

- Organizations see growing ROI as models continuously improve on their own operational data

Why Off-the-Shelf AI Falls Short for Complex B2B Environments

Generic AI platforms are trained on broad datasets and built for horizontal use cases. They force businesses to adapt workflows to the tool rather than engineering solutions around operational reality.

A generic chatbot might answer basic questions. A purpose-built AI reasoning system can route approvals, validate compliance rules, and integrate with proprietary knowledge bases. Three structural gaps explain why that distinction matters.

The Data Isolation Problem

Off-the-shelf AI tools typically cannot access or learn from proprietary enterprise data without significant risk. Internal contracts, sensor data, operational records, and clinical notes remain isolated from the AI, limiting accuracy and relevance in high-stakes B2B environments.

Samsung banned employee use of ChatGPT and similar generative AI tools on company devices after staff uploaded sensitive code to the platform. Apple restricted internal use of ChatGPT and GitHub Copilot over similar concerns about confidential data exposure. These incidents highlight the structural risk of feeding proprietary information into third-party models.

Compliance and Governance Gaps

Generic platforms lack industry-specific compliance controls needed in healthcare, energy, and public sector environments. When compliance is added after deployment, it creates audit trail vulnerabilities and regulatory exposure.

Gartner predicts over 40% of agentic AI projects will be canceled by 2027 due to unclear business value and inadequate risk controls. IDC reports that 88% of AI proofs of concept fail to reach production, largely due to insufficient AI-ready data and unclear ROI.

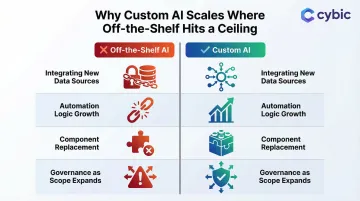

The Scalability Ceiling

Off-the-shelf solutions are capped at their preset features and can't adapt as workflows, data sources, or compliance requirements change. Custom solutions are designed to expand alongside the business. That means:

- Integrating new data sources without rebuilding the core system

- Adding automation logic as operational complexity grows

- Replacing individual components rather than the entire platform

- Maintaining governance controls as scope expands across teams

How Custom AI Development Transforms Core B2B Operations

Intelligent Workflow Automation

End-to-end automation of complex, multi-step workflows (conditional logic, role-based routing, exception handling) goes well beyond what rule-based tools can handle. Contract review, procurement approvals, and compliance monitoring pipelines can run autonomously without manual handoffs.
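To make the three ingredients named above concrete, here is a minimal sketch of a routing step for a procurement approval. It only illustrates the shape of the logic: the `Request` fields, the role names, and the 10,000 threshold are all hypothetical and not taken from any specific system.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """Hypothetical procurement request; field names are illustrative."""
    amount: float
    department: str
    vendor_approved: bool


def route_request(req: Request) -> str:
    """Return the (hypothetical) role that should handle the request next."""
    # Exception handling: malformed input is sent to a human queue
    if req.amount <= 0:
        return "manual_review"
    # Conditional logic: unapproved vendors always need a compliance check
    if not req.vendor_approved:
        return "compliance_officer"
    # Role-based routing by amount (the threshold is an assumed policy value)
    if req.amount >= 10_000:
        return "finance_director"
    return "department_manager"


print(route_request(Request(amount=2_500, department="ops", vendor_approved=True)))
```

In a real deployment, a model would typically feed signals into rules like these (for example, a risk score extracted from a contract) rather than replace them, which keeps each routing decision explainable.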

AI-driven contract analysis reduces review time by 56.25% on average across various contract types. In one implementation, contract review time dropped 80% to just 30 minutes per document using AI contract management.

Predictive Analytics and Decision Support

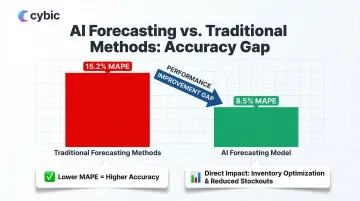

AI models trained on a company's own historical data consistently outperform generic forecasting tools. For demand planning, sales pipeline projection, or equipment failure prediction, these models improve continuously as they ingest new operational data.

A retail AI forecasting model achieved 8.5% Mean Absolute Percentage Error, significantly outperforming traditional methods at 15.2% MAPE. This accuracy gap translates directly to inventory optimization and reduced stockout costs.
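For readers unfamiliar with the metric, MAPE is the average of the absolute forecast errors expressed as a percentage of the actual values. The sketch below computes it on a few made-up numbers (the `actual` and `forecast` lists are purely illustrative) and then works out the relative gap between the two MAPE figures quoted above.

```python
def mape(actual, forecast):
    """Mean Absolute Percentage Error, in percent.

    Assumes both sequences have the same length and no actual value is zero.
    """
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    total = sum(abs((a - f) / a) for a, f in zip(actual, forecast))
    return 100 * total / len(actual)


# Illustrative data only: per-period errors are 10%, 5%, and 0%
actual = [100, 200, 400]
forecast = [110, 190, 400]
print(round(mape(actual, forecast), 2))

# Relative reduction in error between the two figures cited in the text
relative_reduction = (15.2 - 8.5) / 15.2
print(f"{relative_reduction:.0%}")
```

On the quoted figures, moving from 15.2% to 8.5% MAPE is a reduction of roughly 44% in average percentage error.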

AI-Powered Document and Data Processing

NLP and OCR-based AI extract, classify, and act on structured data from unstructured documents such as invoices, technical reports, and compliance filings, then automatically populate downstream systems like ERPs and CRMs. The problem this solves is significant:

- 92.9% of all data generated in 2023 was unstructured, creating bottlenecks manual teams can't clear

- Manual data entry carries an inherent error rate of ~1%, which compounds to 18-40% of records containing errors across large datasets

- Poor data quality costs organizations an average of $12.9 million per year
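One way to see how a ~1% error rate can grow into the 18-40% range is to treat it as a per-field rate. If each field is keyed independently, a record with n fields contains at least one error with probability 1 - 0.99^n. The independence assumption and the field counts used below (20 and 50) are illustrative choices, not part of the cited figure.

```python
def record_error_rate(field_error_rate: float, fields_per_record: int) -> float:
    """Probability that a record has at least one erroneous field,
    assuming each field fails independently at the same rate."""
    return 1 - (1 - field_error_rate) ** fields_per_record


for fields in (20, 50):
    rate = record_error_rate(0.01, fields)
    print(f"{fields} fields per record -> {rate:.1%} of records affected")
```

Under these assumptions, records with 20 fields come out at about 18.2% and records with 50 fields at about 39.5%, which brackets the range quoted above.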

Generative AI Copilots for Enterprise Teams

Enterprise LLM applications built on a company's own content and knowledge base support team decision-making, surface relevant information from internal repositories, and accelerate complex tasks like report generation and RFP responses.

The critical distinction: these copilots are grounded in proprietary data but never expose it to external model training. Cybic builds enterprise LLM applications with data governance embedded at the architecture level: proprietary enterprise data is not used to train external models, and confidential information stays protected by design.
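A common pattern for using internal content without training a model on it is retrieval: relevant documents are looked up at question time and passed to the model as context. The sketch below is a toy version of that pattern and not a description of any vendor's implementation. The keyword-overlap scoring, the document IDs, and the prompt layout are all assumptions made for illustration, and no model is actually called.

```python
def retrieve(query, documents, top_k=2):
    """Rank documents by how many query words they share (a toy relevance score)."""
    query_words = set(query.lower().split())
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_words & set(text.lower().split()))
        if overlap:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by document ID for stable output
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(query, documents):
    """Assemble a prompt that carries the retrieved text as context."""
    context = "\n".join(documents[d] for d in retrieve(query, documents))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical internal knowledge base
docs = {
    "policy-7": "travel expenses over 500 require director approval",
    "rfp-12": "standard rfp response template for energy clients",
}
print(retrieve("who approves travel expenses", docs))
```

Because the documents are only read at query time, they stay inside the organization's own storage, and access rules can be applied to the retrieval step itself.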

Operational Visibility and Real-Time Intelligence

Custom AI systems integrate with operational data streams such as IoT sensors, ERP outputs, and field reporting systems to give leadership real-time visibility into performance, anomalies, and emerging risks. For industries like manufacturing and energy, where an undetected anomaly can halt production or trigger compliance failures, that visibility is the difference between a managed exception and an unplanned shutdown.
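As a simple illustration of anomaly detection on a sensor stream, the sketch below flags readings that sit far from the mean of the preceding window. A trailing z-score is just one basic technique, chosen here for brevity. The window size, the threshold, and the temperature values are assumptions, and production systems usually rely on more robust models.

```python
from statistics import mean, stdev


def find_anomalies(readings, window=5, threshold=3.0):
    """Return indexes of readings that deviate from the trailing-window mean
    by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Flat history: any change at all counts as a deviation
            if readings[i] != mu:
                anomalies.append(i)
            continue
        if abs(readings[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical temperature readings with one spike at index 6
temps = [70.1, 70.3, 69.9, 70.2, 70.0, 70.1, 85.0, 70.2]
print(find_anomalies(temps))
```

One known limitation of this simple approach is that a spike inflates the standard deviation of the windows that follow it, so a second anomaly arriving shortly afterward may be missed.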

Industry-Specific Wins: Custom AI in Action

Manufacturing: Predictive Maintenance and Production Optimization

Custom AI models trained on equipment sensor data and production line metrics predict failures 24–48 hours in advance, reduce unplanned downtime, and optimize production scheduling.

AI-driven predictive maintenance reduces maintenance costs by 18–25% and cuts unplanned downtime by up to 50%. Decision tree models achieved 98.24% accuracy with prediction speeds over 421,000 observations per second in predictive maintenance applications.

Healthcare: Clinical Workflow Enhancement and Data Governance

Custom AI improves clinical workflows by surfacing relevant patient history, flagging documentation gaps, and automating administrative triage. It does this while maintaining HIPAA alignment, role-based access controls, and strict data isolation, with no proprietary data exposed to external model training.

The administrative burden on clinicians is measurable:

- Physicians spend approximately two hours on EHR and administrative tasks for every hour with patients

- Virtual scribes reduce total EHR time by an average of 5.6 minutes per appointment

- Ambient AI scribes reduce clinician burnout from 51.9% to 38.8% after 30 days of use

In healthcare, the regulatory and patient safety stakes make purpose-built, auditable AI the only viable path, rather than a general-purpose model retrofitted to clinical needs.

Oil & Gas and Energy: Safety, Compliance, and Infrastructure Intelligence

Custom AI addresses three high-stakes operational needs across distributed energy infrastructure:

- Real-time safety compliance monitoring at scale

- Anomaly detection in pipeline and drilling operations

- Automated regulatory reporting with full audit trails

The financial and safety exposure is significant. Unplanned downtime costs the industrial and manufacturing sector over a trillion dollars annually in lost revenue. Equipment failure represents 48% of liquids pipeline incidents over a 5-year period, with corrosion failures accounting for another 18%.

When regulators or insurers ask how a decision was made, the answer needs to be traceable, not a black box. That requirement alone rules out generic AI tools for most operators in this sector.

Retail and Supply Chain: Demand Forecasting and Operational Visibility

Custom AI models analyzing a retailer's proprietary sales history, supplier data, and seasonal patterns deliver more accurate demand forecasts than generic forecasting tools.

Gartner predicts 70% of large organizations will adopt AI-based forecasting by 2030. McKinsey reports AI-powered demand forecasting demonstrates a 10–20% reduction in forecasting error in retail environments. Fewer forecasting errors mean less overstock, fewer stockouts, and tighter supplier coordination, each one a direct cost reduction.

Build vs. Buy: When Custom AI Development Makes Business Sense

The build-vs-buy decision comes down to fit. Off-the-shelf AI tools work for straightforward, low-stakes functions. Custom development becomes the right call when your workflows, data, or compliance environment are too specific for a generic solution to address.

Off-the-shelf tools may be appropriate for:

- Early-stage companies testing AI concepts

- Low-complexity functions like basic email drafting

- Standardized processes with minimal customization needs

Custom development makes sense when you:

- Operate with legacy systems requiring deep integration

- Face strict regulatory requirements (HIPAA, GDPR, industry-specific standards)

- Process proprietary data that cannot be exposed to third-party models

- Need AI that scales with operational complexity

- Require audit trails and role-based access controls

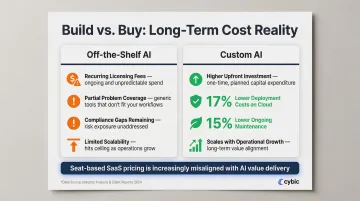

Total Cost of Ownership

Custom AI development carries higher upfront costs than licensing an off-the-shelf tool. Over time, that investment typically outperforms the alternative: recurring fees for multiple generic tools that cover only part of the problem, while compliance risk and operational gaps remain unaddressed.

Infrastructure choices affect this calculation as well. A Forrester study found that deploying AI on Azure cloud infrastructure results in 17% lower deployment costs and 15% lower ongoing maintenance costs compared to on-premises infrastructure.

The licensing model itself is also shifting. IDC predicts that by 2028, 70% of software vendors will refactor pricing around consumption or outcomes due to AI agent automation. Seat-based SaaS pricing, the dominant model today, is increasingly misaligned with the way AI actually delivers value.
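The trade-off between upfront and recurring costs described in this section can be framed as a break-even calculation. The sketch below is a deliberately simplified model: every figure in it is a placeholder rather than a benchmark, and it ignores discounting, price changes, and the cost of unaddressed compliance gaps.

```python
import math


def break_even_month(upfront, custom_monthly, licensed_monthly):
    """First month in which cumulative custom cost is no higher than
    cumulative licensing cost, or None if that never happens."""
    monthly_saving = licensed_monthly - custom_monthly
    if monthly_saving <= 0:
        return None  # custom never catches up under these inputs
    return math.ceil(upfront / monthly_saving)


# Placeholder inputs for illustration only
print(break_even_month(upfront=300_000, custom_monthly=5_000, licensed_monthly=20_000))
```

With these placeholder inputs the custom build breaks even in month 20. The useful part is the structure of the comparison, which makes it easy to substitute an organization's own estimates.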

Governance, Security, and Responsible AI by Design

Governance cannot be added after deployment. It must be embedded into AI architecture from day one: role-based access controls, encrypted data handling, auditability of AI-driven decisions, and strict policies ensuring proprietary enterprise data is never used to train external models.

Organizations in regulated industries face legal and reputational risk if these controls are absent.

Components of a Responsible AI Framework

A responsible AI framework in a B2B context requires:

- Transparency in how decisions are made

- Traceability of AI actions across workflows

- Continuous monitoring to detect model drift or unexpected outputs

- Role-based access controls to restrict system access by function and seniority

- Encrypted data protection in transit and at rest

- Audit trails documenting all AI-driven actions for compliance verification
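Two of the components listed above, role-based access control and audit trails, can be sketched together in a few lines. This is a minimal illustration under stated assumptions: the roles, the actions, and the hash-chained log design are hypothetical choices, and the example does not cover encryption or drift monitoring.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical mapping of roles to the actions they may perform
PERMISSIONS = {
    "analyst": {"read_report"},
    "compliance_officer": {"read_report", "approve_filing"},
}


def _digest(body):
    """Stable SHA-256 hash of a log entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log in which each entry stores the hash of the previous one,
    so edits to earlier entries become detectable."""

    def __init__(self):
        self.entries = []

    def record(self, user, role, action, allowed):
        body = {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "0" * 64,
        }
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


def authorize(log, user, role, action):
    """Check the role's permissions and log the attempt whether or not it succeeds."""
    allowed = action in PERMISSIONS.get(role, set())
    log.record(user, role, action, allowed)
    return allowed


log = AuditLog()
print(authorize(log, "ana", "analyst", "approve_filing"))
print(authorize(log, "sam", "compliance_officer", "approve_filing"))
print(log.verify())
```

Logging denied attempts alongside approved ones matters for compliance verification, since auditors often want to see what was blocked as well as what was allowed.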

The EU AI Act's general application begins August 2, 2026, establishing legal requirements for high-risk AI systems. The NIST AI RMF 1.0, released January 2023, provides foundational risk management guidelines organized into four core functions: GOVERN, MAP, MEASURE, and MANAGE.

The global average cost of a data breach is $4.4 million, and 63% of organizations lack formal AI governance frameworks. That gap represents direct financial, legal, and operational exposure. Building governance into the architecture from the start is what separates AI systems that scale responsibly from those that create liability.

Conclusion

Custom AI development is not a luxury reserved for the largest enterprises. It is the practical response to operational environments that generic tooling was never designed to handle: fragmented legacy infrastructure, proprietary data that cannot leave the organization, industry-specific compliance requirements, and workflows too complex for horizontal platforms to address.

The case is consistent across sectors. In manufacturing, custom models predict failures before they occur. In healthcare, purpose-built systems reduce administrative burden while maintaining HIPAA alignment. In energy and retail, AI delivers real-time operational intelligence that directly reduces cost and risk exposure. Across all of these, governance embedded from the architecture level is what makes the difference between a system that scales and one that creates liability.

The build-vs-buy question is ultimately a question about fit. When your workflows, data, and compliance requirements are specific enough that no off-the-shelf tool addresses all three, custom development is not just the better option - it is the only viable one.

Looking ahead, the trajectory is clear. As AI-based forecasting becomes standard across large organizations, as the EU AI Act and similar frameworks raise the legal bar for high-risk AI systems, and as seat-based SaaS pricing gives way to consumption and outcome models, the structural advantages of custom AI will compound. Organizations that invest now in purpose-built, governed AI systems will not just operate more efficiently - they will carry a durable competitive advantage into an environment where AI capability increasingly defines the ceiling for what any B2B enterprise can achieve.

Frequently Asked Questions

What is the difference between custom AI development and off-the-shelf AI tools?

Off-the-shelf AI tools are built for broad, horizontal use cases and require businesses to adapt their workflows to fit the platform. Custom AI development engineers systems around an organization's specific operations, data environment, and compliance requirements. The result is deeper integration, higher accuracy on proprietary data, and governance controls that are embedded from the start rather than bolted on afterward.

Which B2B industries benefit most from custom AI?

Custom AI delivers measurable impact across manufacturing (predictive maintenance and production optimization), healthcare (clinical workflow automation and HIPAA-aligned data governance), oil and gas (safety compliance monitoring and anomaly detection), and retail and supply chain (demand forecasting and inventory optimization). Any industry operating with proprietary data, strict regulatory requirements, or multi-step workflows that generic platforms cannot replicate is a strong candidate for custom development.

How does the custom AI development process work?

Custom AI development begins with a detailed assessment of existing infrastructure, data sources, compliance requirements, and workflow complexity. From there, the solution is architected to integrate with systems already in use - ERPs, CRMs, legacy databases - with governance controls embedded at the architectural level. Development proceeds iteratively, with models trained on proprietary data and validated against operational outcomes before full deployment.

What is the ROI timeline for custom AI, and how does it compare to off-the-shelf tools?

Custom AI carries higher upfront development costs than licensing a generic platform. Over time, the investment typically outperforms the alternative: off-the-shelf tools require multiple licenses to cover partial use cases while compliance gaps and integration costs accumulate separately. Custom models also improve continuously as they ingest more operational data, which means ROI compounds over time rather than plateauing at the tool's built-in feature ceiling.

How is AI governance and security handled in a custom AI system?

Governance must be embedded at the architecture level from day one - not added after deployment. A responsible custom AI framework includes role-based access controls, encrypted data handling in transit and at rest, full audit trails of AI-driven actions, and strict policies ensuring proprietary enterprise data is never used to train external models. This approach supports alignment with frameworks like the EU AI Act and NIST AI RMF 1.0, and provides the traceability that regulated industries require when AI-driven decisions are subject to audit.